As AI compresses decisions into milliseconds, the designers who thrive will be the ones who know when to slow things down — and why trust demands it.

We’ve spent a decade worshipping speed. But as AI compresses action into milliseconds, something unexpected is happening: the faster our products become, the less we trust them.

Spend five minutes in a typical product strategy session and you’ll hear the same mantra: eliminate friction, reduce clicks, make the experience invisible. Friction has been treated as a defect. We’ve optimized and streamlined until intent and execution are separated by milliseconds.

When the outcome matters (money, health, security), speed alone no longer signals competence. It can signal carelessness.

The wealth management paradox

Think about how wealth management works today. There are “disruptors” like Robinhood, Cash App, and Revolut on one side. These platforms have reduced complicated market moves to a “swipe-to-complete” loop that delivers a dopamine rush. They are works of UX art, engineered for ease of entry.

On the other side, there are the “legacy” giants: Morgan Stanley, Charles Schwab, and Fidelity. The tech elite often dismiss these institutions because their verification processes are long and their interfaces feel “heavy.” But where money matters and risk is ever-present, those same qualities read as signs of seriousness, not bugs.

This creates a psychological whiplash: we want to buy a pair of sneakers on Amazon with a single tap, but we want our financial futures to feel like they require a key, a vault, and a heavy door. We’ve been told that “speed is king,” but when it comes to our life savings, we find ourselves searching for the brakes.

This contradiction isn’t random. It follows a predictable pattern. Where your product sits on two key dimensions determines whether speed builds confidence or erodes it.

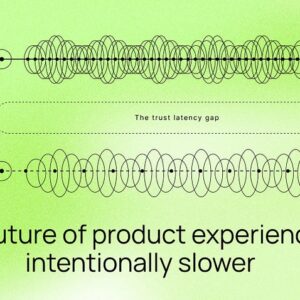

The trust-latency gap

The Trust-Latency Gap is the distance between how fast a system can execute a decision and how long a human needs to feel confident about it. As AI drives execution time toward zero, that distance only grows.

This framing extends well beyond fintech. As LINC Interaction Architects argue in Beyond Usability: Designing UX for Trust in the Age of AI, usability is no longer the bar — trust is. Getting the job done quickly is table stakes; making users feel confident in the system doing it is the harder, more important problem.

The Reversibility-Impact Matrix maps any product interaction across two questions: How significant is the consequence? And how easily can the user undo it? Actions that are low-impact and reversible (saving a draft, liking a post, adding to a wishlist) should be frictionless. Actions that are high-impact and irreversible (transferring funds, submitting a legal agreement, deleting an account) warrant visible deliberation. The two middle quadrants are where most design judgment lives: high-impact but reversible actions like portfolio rebalancing benefit from Visible Guardrails that show process without blocking speed, while low-impact irreversible actions like a public comment need only a Gentle Nudge, a light confirmation that registers consequence without creating anxiety.
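One way to make the four quadrants concrete is a small lookup, sketched here in Python. The names `Treatment` and `friction_treatment` are illustrative assumptions, not part of any library or of the original framework, and the boolean inputs are a simplification: in practice both impact and reversibility sit on a spectrum and call for judgment.

```python
from enum import Enum


class Treatment(Enum):
    FRICTIONLESS = "Frictionless"
    GENTLE_NUDGE = "Gentle Nudge"
    VISIBLE_GUARDRAILS = "Visible Guardrails"
    VISIBLE_DELIBERATION = "Visible Deliberation"


def friction_treatment(high_impact: bool, reversible: bool) -> Treatment:
    """Map an interaction to one of the four quadrants described above."""
    if high_impact and not reversible:
        return Treatment.VISIBLE_DELIBERATION   # e.g. transferring funds
    if high_impact and reversible:
        return Treatment.VISIBLE_GUARDRAILS     # e.g. portfolio rebalancing
    if not reversible:
        return Treatment.GENTLE_NUDGE           # e.g. posting a public comment
    return Treatment.FRICTIONLESS               # e.g. saving a draft


# Hypothetical classifications, taken from the examples in the text.
print(friction_treatment(high_impact=True, reversible=False).value)
print(friction_treatment(high_impact=False, reversible=True).value)
```

The value of writing it down this way is less the code than the forced conversation: someone on the team has to commit to an answer for each interaction.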

The Reversibility-Impact Matrix reveals why the wealth management paradox exists. Robinhood technically operates in the “high impact + reversible” zone; you can sell your stocks, undo your trades. But users experience it as permanent. A $50K trade feels psychologically irreversible in the moment, even if it’s technically not. That mismatch between technical reversibility and psychological permanence is what creates the unease.

Legacy firms like Charles Schwab, meanwhile, design for the perception, not just the reality. Their multi-step verifications aren’t there because the technology requires them. They’re there because a $50K decision should feel like it demands careful handling: visible steps, deliberate checkpoints, a moment of pause before anything becomes final.

When it doesn’t feel right, right away

But a strange thing happens when the stakes get high. Picture this scenario: You’re about to execute a $50K cryptocurrency trade on a fintech platform. The transaction processes in 0.3 seconds. Confirmation appears. But instead of relief, you feel… unease. Should something this significant happen this quickly?

Or think about getting medical advice from an AI. You describe your symptoms to a diagnostic algorithm. Within seconds, it returns a detailed analysis and treatment recommendation. The speed is impressive. The technology is sound. Yet something about the instantaneous response feels hollow, almost dismissive.

This isn’t a fear of technology or a longing for simpler times. Machines operate at computational speed; humans process risk emotionally and cognitively. Trust has its own processing speed, and it’s far slower than our algorithms.

The labor illusion: why effort matters

Behavioral economists have documented what they call the Labor Illusion, the phenomenon where we value things more when we can perceive the work being done. It explains why users instinctively trust a loan application that asks detailed questions and takes several minutes over an identical one that approves in seconds. The perceived effort signals competence, even when the outcome is the same.

Research by Ryan Buell and Michael Norton at Harvard Business School (Management Science, 2011) found that users rated travel booking sites more favorably when the interface showed a progress indicator, even when results had already loaded. The illusion of computational effort built trust; removing it made identical results feel arbitrary.

The implications for UX design are significant. We’ve spent years optimizing away every perceivable moment of “work” from our interfaces. We’ve hidden the complexity, masked the processing, and eliminated the wait. In doing so, we may have eliminated the very signals that make users feel confident in the outcome. The case for designing friction as a tool rather than a defect has been building for years — the field is only now catching up to it.

Strategic friction: a new framework

As AI begins to automate our workflows at a pace that exceeds our ability to process them, the “straight line” from user to outcome is becoming dangerous. The most valuable designers in this new era won’t be the ones who shave another second off a loading state. They will be the ones who know exactly where to build a “speed bump,” the ones who understand that in a world of instant AI results, Strategic Friction is the only bridge to human trust.

Strategic Friction isn’t about making bad UX or artificially degrading performance. It’s about the intentional, expert calibration of interaction speed to match psychological needs. It’s recognizing that different contexts demand different temporal experiences. The momentum behind this idea is growing — Ian Araujo’s recent exploration of intentional friction in security and usability is one signal that the design community is arriving at the same conclusion from multiple directions.

Consider how many AI chat assistants reveal their responses. Rather than delivering answers instantaneously, the text streams word by word, a design pattern common across conversational AI tools. Whether it is deliberate or simply convention, the effect is real: it builds anticipation, signals “thinking,” makes the AI feel collaborative rather than omniscient, and gives users time to process information as it arrives.

Or look at how some virtual health platforms have begun adding “analysis periods” where the interface shows the AI cross-referencing medical databases and reviewing diagnostic criteria. The actual computation happens in milliseconds, but the visualization period dramatically increases patient confidence in the recommendation. Research in explainable AI (XAI) for clinical settings consistently shows that when diagnostic tools make their reasoning visible, surfacing which criteria were weighted and which data points were reviewed, both clinicians and patients report higher confidence in the recommendations and stronger follow-through on treatment plans. The delay isn’t deceptive; it’s translating machine-speed processing into human-speed trust-building.

Applying the framework:

Before calibrating friction for any interaction, ask three questions:

1. What’s the impact? If this action goes wrong financially, medically, socially, or legally, how significant is the damage?

2. How reversible is it? Can the user undo it in seconds, or is the outcome permanent the moment they confirm?

3. What does the user perceive? Even if an action is technically reversible, does it feel permanent to the user in the moment?

If impact is high and reversibility is low, or perceived as low, design for deliberation. If impact is low and the action is easy to undo, design for speed.
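The three questions can be sketched as a short decision function. This is a minimal illustration, not a definitive implementation: the `Interaction` fields and the `calibrate` function are hypothetical names, and the one modeling assumption is that an action counts as reversible only when it is reversible both in fact and in feel.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    name: str
    high_impact: bool             # Question 1: what's the impact?
    technically_reversible: bool  # Question 2: how reversible is it?
    feels_reversible: bool        # Question 3: what does the user perceive?


def calibrate(interaction: Interaction) -> str:
    """Suggest a design posture for one interaction."""
    # Assumption: perceived permanence overrides technical reversibility.
    effectively_reversible = (
        interaction.technically_reversible and interaction.feels_reversible
    )
    if interaction.high_impact and not effectively_reversible:
        return "deliberation"
    if not interaction.high_impact and effectively_reversible:
        return "speed"
    return "judgment call"  # the middle ground, where design judgment lives


# The $50K trade: reversible on paper, permanent in the moment.
trade = Interaction("$50K trade", True, True, False)
like = Interaction("liking a post", False, True, True)
print(calibrate(trade))
print(calibrate(like))
```

Note how the trade lands on deliberation even though it is technically reversible; the third question is what moves it there.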

Where friction creates value

The art of Strategic Friction lies in calibration. Not every interaction requires it. Your Instagram feed should still load instantly. Your text messages shouldn’t buffer unnecessarily. The framework requires understanding when speed erodes trust and when it delivers value.

Apply Strategic Friction when:

Stakes are high. Financial transactions, medical decisions, legal agreements: these warrant visible deliberation. When someone is transferring significant wealth or making healthcare choices, show them the verification steps. Let them see the security protocols. Give them a moment to feel in control of an irreversible decision.

Complexity is hidden. When AI or automation is performing sophisticated analysis invisibly, users need signals that the work is happening. A robo-advisor that instantly spits out portfolio recommendations feels arbitrary. One that shows “analyzing your portfolio across 10,000 market scenarios” builds confidence in the sophistication of the recommendation.

Trust is being established. First-time users, new relationships, unfamiliar contexts: these all benefit from interfaces that demonstrate thoroughness. A mortgage approval that happens in 30 seconds might be technically accurate, but it doesn’t feel like the gravity of the commitment has been properly acknowledged.

Decisions are irreversible. The more permanent the outcome, the more users benefit from built-in moments of reflection. Confirmations, cooling-off periods, and multi-step verifications aren’t problems; they protect users from mistakes they can’t take back.

The stakes extend into the physical world. Consider a smart home security system prompting the user to disable all sensors before a planned event. The AI knows the user’s patterns; it could auto-disable and restore silently. But in that context, silence feels like negligence. Users want to see the confirmation screen. They want to tap “I understand this will leave my home unmonitored for 4 hours.” The friction isn’t an obstacle to the action; it’s evidence that the system understands the weight of it. Hardware-software products face this acutely because the consequence often extends beyond the screen: a door unlocked, a camera disabled, a thermostat overridden. When a digital action produces a real-world outcome that can’t be undone with a back button, the interface needs to communicate that a threshold is being crossed.

Keep it fast when:

Stakes are low and actions are reversible. Social media interactions, content browsing, favoriting items: these low-commitment actions should remain frictionless. Speed is the value proposition for real-time updates and messaging. Experienced users performing routine tasks benefit from efficiency, not deliberation.

Substance over performance

Strategic Friction only works when it’s honest.

There’s a meaningful difference between a delay that reflects genuine complexity (an AI that surfaces its reasoning, a confirmation screen that summarizes what’s about to happen) and a delay that simply performs seriousness without delivering it. The former builds trust. The latter is a dark pattern.

Two failure modes are worth naming explicitly.

First: artificial delay that misrepresents system activity. If a platform shows a “verifying your security settings” animation while nothing is actually being verified, it isn’t building confidence; it’s manufacturing it. When users discover the performance, and they will, the trust damage is worse than if no friction had existed at all.

Second: in crisis or time-sensitive scenarios, deliberate friction can destroy the very trust it’s meant to build. A security breach alert that requires three confirmation steps before the user can act. An emergency medical interface that adds a “reflection moment” before displaying critical results. These are not calibration failures; they’re design failures. Speed is sometimes the only signal of competence that matters.

The principle, then, is transparency. Strategic Friction calibrates experience to match genuine risk and complexity. It never invents risk to manufacture gravity.
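The two failure modes can be turned into a simple review check that a team might run against any proposed friction step. This is only a sketch under stated assumptions: `FrictionStep`, its fields, and `review_friction` are hypothetical names invented for illustration, and answering the two boolean questions honestly is the hard part that no code can do.

```python
from dataclasses import dataclass


@dataclass
class FrictionStep:
    label: str                 # what the interface tells the user is happening
    backed_by_real_work: bool  # does the system actually do what the label claims?
    time_sensitive: bool       # is the user in a crisis or time-critical flow?


def review_friction(step: FrictionStep) -> list[str]:
    """Return the failure modes a proposed friction step would trigger."""
    problems = []
    if not step.backed_by_real_work:
        problems.append("misrepresents system activity")
    if step.time_sensitive:
        problems.append("delays a time-sensitive action")
    return problems


# Hypothetical steps, modeled on the examples in the text.
fake_check = FrictionStep("Verifying your security settings", False, False)
summary = FrictionStep("Review what is about to happen", True, False)
print(review_friction(fake_check))
print(review_friction(summary))
```

An empty list does not prove a step is worth adding; it only says the step avoids the two failure modes named above.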

The implementation challenge

Here’s the uncomfortable reality for product and design teams: adding Strategic Friction requires admitting that pure efficiency isn’t always the answer. For an industry that’s spent two decades optimizing for speed, conversion, and time-to-task-completion, this represents a fundamental philosophical shift.

It requires research, nuance, and subjective judgment about user psychology. You can’t A/B test your way to the perfect friction coefficient in the same way you can optimize button colors. You have to understand emotional states, risk perception, and the specific anxieties your users bring to high-stakes interactions.

This demands deeper behavioral insight than traditional optimization. It means product teams need to develop new muscles, combining behavioral psychology, user research, and contextual judgment in ways that pure data optimization never required. Recent work on the psychology of trust in AI confirms this: the metrics that matter most — confidence, follow-through, post-decision regret — don’t show up in your conversion dashboard. It means having uncomfortable conversations about whether a feature that boosts conversion in the short term might erode trust in the long term.

Product teams that have introduced structured review steps before high-stakes transactions (a confirmation screen, a summary of what’s about to happen) consistently report not just higher completion rates, but fewer post-transaction support contacts and regret-driven reversals. The friction didn’t slow users down; it gave them confidence to proceed.

Designing for the AI era

Instead of asking “how fast can we make this?” we should ask “how fast should it feel?”

Pedro del Rio frames it well: designing for AI agents may be the hardest UX challenge of 2026 precisely because every layer of automation that removes a human from the loop also removes a moment of natural trust-building. The speed gains are real. The confidence gap they create is equally real.

The wealth management paradox we opened with isn’t really a paradox at all; it’s users telling us exactly what they need. They want efficiency where it adds value. They want deliberation where it builds confidence. They want speed for the mundane and substance for the significant.

The designers who thrive in 2026 and beyond will be the ones who can read this distinction, who understand that sometimes the best user experience includes a three-second pause that communicates: “We’re taking this seriously. You should too.”

The path forward

Strategic Friction represents a maturation of UX design principles. We’ve mastered speed. We’ve conquered conversion optimization. Now we need to master calibration: the subtle art of matching interaction tempo to psychological need.

This doesn’t mean abandoning everything we’ve learned about good UX. Remove unnecessary steps. Eliminate actual friction: the accidental complexity, the poor information architecture, the confusing navigation. But once you’ve streamlined the experience to its essence, ask yourself: In this specific context, with these specific stakes, what does trust feel like?

In an AI-driven world where machines operate in microseconds, designers are no longer optimizing for speed alone; they’re calibrating tempo. The future of product experience belongs to teams who know when to remove friction and when to introduce it deliberately. Trust has a processing speed. The products that endure will be the ones that respect it.

Research cited in this piece

- Ryan W. Buell & Michael I. Norton — The Labor Illusion: How Operational Transparency Increases Perceived Value of Services (Management Science, Harvard Business School, 2011)

- Markus Scholl et al. — Explainable Artificial Intelligence in Clinical Decision Support: Systematic Review (JMIR Medical Informatics, 2023)

From the design community:

- Designing Friction For A Better User Experience — Smashing Magazine

- Intentional friction: balancing security and usability in UX — Ian Araujo

- The Trust Problem: Why Designing for AI Agents — Pedro del Rio

- Beyond Usability: Designing UX for Trust in the Age of AI — LINC

- The Psychology of Trust in AI — Smashing Magazine

The trust-latency gap: why the future of UX is intentionally slower was originally published in UX Collective on Medium.