Why the industry is investing billions in better models when the real failure happens before the model ever runs

A few months ago, I watched a physician abandon an AI diagnostic tool in under 30 seconds.

She wasn’t skeptical of the technology. She’d actively asked for it. Her hospital had deployed the tool after a year-long procurement process, and she was one of the designated early adopters. When she opened it for the first time, she looked at the screen for about eight seconds, scrolled once, closed the tab, and never came back. I know this because I was running the audit that captured her session.

I’ve spent the last year auditing healthcare AI products, including one platform that had processed over 22 million consultations. What I’ve learned is something the industry is still refusing to acknowledge: the trust gap in healthcare AI isn’t about the AI. It’s about the first 30 seconds.

The industry is diagnosing the wrong problem

If you read the research on healthcare AI adoption, you’ll find a consistent story. The JMIR 2025 study of 1,762 participants found that simply mentioning AI involvement in clinical care decreased patient trust and willingness to seek treatment. A 2025 paper in Diagnostics tested a dynamic confidence scoring framework across 6,689 cardiovascular cases and found that clinician override rates dropped from 87.6% to 33.3% when the AI’s uncertainty was made visible. Lee and See’s foundational research on trust in automation, cited 4,100+ times, established two decades ago that trust builds slowly from successes but collapses quickly after failures.

The consensus response to these findings has been to improve the models. Better training data. More specialized fine-tuning. Clearer clinical validation. Longer evidence documentation. And the models have gotten better. They’re genuinely impressive now. The market is projected to exceed $500 billion by 2030.

None of it has moved the adoption needle the way anyone expected.

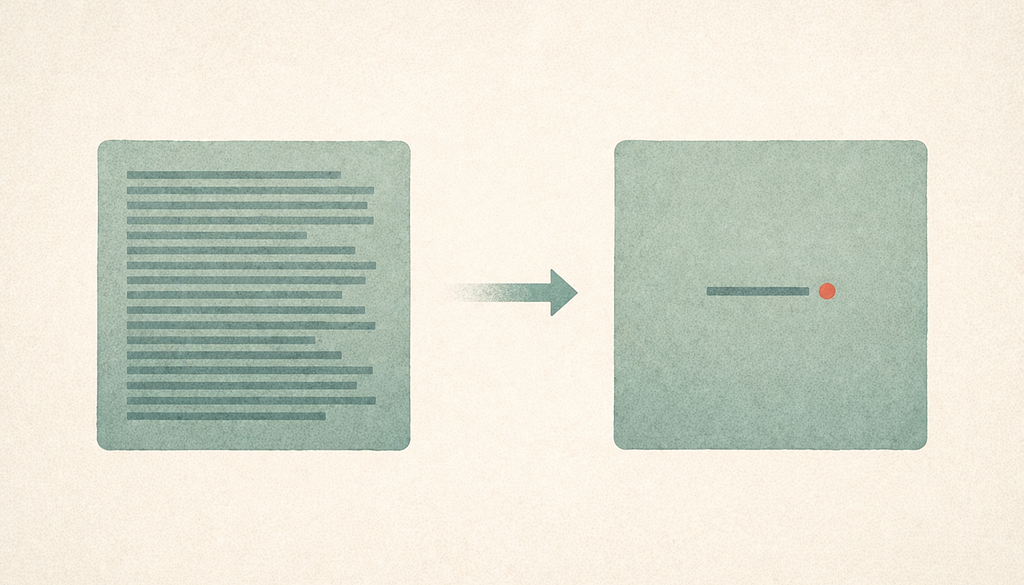

The reason is simple, and once you see it, you can’t unsee it: trust in healthcare AI is formed before the model runs. It’s formed in the layout of the first screen. It’s formed in whether the product asks for symptoms before demonstrating any value. It’s formed in how many clicks stand between the user and proof that this thing is worth their time. By the time the AI generates its first output, the trust decision has already been made, and the model had nothing to do with it.

This isn’t a controversial claim if you’ve actually watched people use these products. But it’s a claim the industry keeps dancing around, because the alternative is uncomfortable: most healthcare AI products aren’t failing because of their models. They’re failing because of their interfaces. And fixing interfaces requires a completely different set of skills than fixing models.

A tale of two homepages

Look at how K Health introduces itself on its homepage. The hero section centers on “AI-powered primary care.” The first thing the visitor learns is what the technology is, not what the product does for them. The product is leading with capability.

Now look at One Medical’s homepage. The hero section leads with “Exceptional primary care.” The booking action is prominent. The AI is doing significant work in the background (recommending providers, suggesting appointment times, routing to telehealth), but none of that is on the first screen. One Medical is leading with the outcome the patient came for.

This isn’t an aesthetic preference. Irrational Labs ran a controlled experiment with One Medical where new members in the experimental condition were given an immediate opportunity to book with a recommended provider, instead of landing on a general homepage. Bookings increased 20%. Same product, same AI, same providers, different first screen, different outcome.

Nuance DAX takes this principle even further. DAX is an ambient documentation tool that uses AI to listen to doctor-patient conversations and generate clinical notes afterward. The clinician never has to interact with the AI directly. There’s no prompt to write and no interface to learn. The AI is invisible to the user, embedded in a workflow the clinician already has. Stanford Health Care reported that 96% of physicians in their pilot found it easy to use. The “trust” question never came up because the product never asked the clinician to evaluate the AI; it just made their existing work easier.

What a 22-million-consultation audit revealed

The platform I audited was one of the most sophisticated healthcare AI products I’ve seen. It had processed 22 million consultations. It was backed by a major venture firm. Its clinical validation was published in peer-reviewed journals. Its models were objectively excellent.

My audit identified 12 critical UX issues. I expected the issues to cluster around edge cases or complex workflows. They didn’t. Nine of the 12 affected the user’s first 60 seconds.

The most consequential finding was about trust signal placement. The platform’s physician endorsements, clinical validation data, and outcome transparency metrics were positioned an average of 3.2 navigation steps from the entry screen. The sensitive data collection (symptoms, medical history, insurance details) occurred within the first interaction step. A patient was being asked to share some of their most personal information before the product had demonstrated any reason to trust it.

This inverts every principle of trust formation we have from Mayer, Davis and Schoorman’s foundational model. Trust builds through reciprocity. You give something before you ask for something. The platform was asking for health data from people who had seen nothing but a form field and a loading spinner.

I kept coming back to that physician who closed the tab in eight seconds. I wondered what she’d seen, so I went back and looked at the screen she’d been staring at. It was a dashboard with 14 navigation options. None of them were pre-configured for her specialty, her patient population, or her workflow. The AI’s capability was advertised across the top in a banner. The trust signals (the ones that might have made her stay) were two clicks down.

She didn’t leave because she was AI-skeptical. She left because the interface told her, in the space of eight seconds, that this tool had been designed for someone who wasn’t her.

What happened when we stopped leading with capability

The other side of this story is a project I worked on called Rise Health, a patient-facing booking platform. When I joined, the product was struggling to convert visitors into completed bookings. The team’s instinct was to improve the matching algorithm, the AI component that paired patients with specialists based on their symptoms. The matching was actually fine. The conversion problem wasn’t in the model.

It was in the first 10 seconds of the homepage.

The original homepage led with a description of the AI: “Our advanced algorithm analyzes your symptoms and matches you with the right specialist based on clinical guidelines, availability, and insurance coverage.” It was accurate. It was comprehensive. It was completely backwards.

No patient arrives at a healthcare booking site wanting to understand an algorithm. They arrive wanting relief. They want to know whether this thing can get them in front of a doctor who can help. They want to know when. They want to know how.

We changed one sentence. We replaced the algorithm description with “Get matched with a specialist in under 2 minutes.” We didn’t touch the backend. We didn’t retrain any models. We didn’t change what the product did. We changed what the product said it did.

Bookings increased 6x over the following 8 weeks. Intake form abandonment dropped 29%. The same AI, performing the same way, wrapped in language that led with outcomes instead of capability.

I’ve told this story to other designers and the response is always the same: “That’s just copywriting.” And it is, partly. But I think dismissing it as copywriting misses what actually happened. The old homepage was optimized to explain the AI to the product team. The new homepage was optimized to answer the user’s question. Those are two entirely different design problems, and the industry mostly works on the first one while pretending it’s working on the second.

I got the first version wrong

I should be honest about something. The first redesign I proposed for Rise Health wasn’t the one that worked. My initial instinct was to add more trust signals: physician credentials, clinical validation badges, testimonials, press logos. I wanted to bury the user in proof.

It made the conversion worse.

What I missed is that trust signals only work after the user has decided the product might be relevant to them. If you haven’t answered “can this help me,” nothing you say about how trustworthy you are matters. The trust signals were an answer to a question the user hadn’t asked yet. I had to pull most of them off the first screen and put them where they’d be encountered after the user had already committed to the flow.

This is the part of the work I keep failing to communicate to other practitioners. Healthcare AI isn’t a special domain requiring specialized trust signals. It’s a domain where the basic principles of first-session design apply with higher stakes. The trust signals matter, but only in their right place in the sequence. The first question every first-time user is asking (in any product, AI or otherwise) is “is this for me?” Everything else comes after.

What this means for anyone shipping AI in high-stakes domains

I want to be careful not to turn this into a list of tips, because that’s not really what I’m arguing. What I’m arguing is that the framing of “healthcare AI has a trust problem” is leading the industry to the wrong solutions. We’re investing billions in making models more trustworthy when the actual trust failures are happening before the model ever runs.

If you’re designing an AI product right now, especially in a regulated domain, I’d push back on two assumptions that I’ve seen derail projects repeatedly.

The first assumption is that model quality is the bottleneck. It usually isn’t. The bottleneck is whether a skeptical user can figure out, in the space of their first session, whether this thing is worth their attention. The models are already good enough. The interfaces around them aren’t.

The second assumption is that trust is built through disclosure. The industry has embraced a kind of radical transparency: long explanations, confidence scores, detailed methodology, links to clinical studies. All of this is well-intentioned. Most of it is in the wrong place. Trust isn’t built through disclosure. Trust is built through reciprocity, through proving value before requesting investment, and through showing the user you understand what they came for.

The physician who closed the tab in eight seconds wasn’t a trust failure. She was a reciprocity failure. The product wanted her data before she’d seen what the product could do. She did what any rational person would do: she left.

I keep coming back to that eight seconds because it contains, in miniature, everything the industry is getting wrong. We spent years optimizing the model. She decided in eight seconds that the model didn’t matter, because she never got to it.

That’s the gap. And it’s not about the AI.

Sibanu Bora is a Senior Product Designer specializing in AI and healthcare products. His work focuses on first-session design: the moments that determine whether users trust a product enough to use it.

The trust gap in healthcare AI isn’t about the AI was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.