How consumer security products turn physical presence into assumed consent

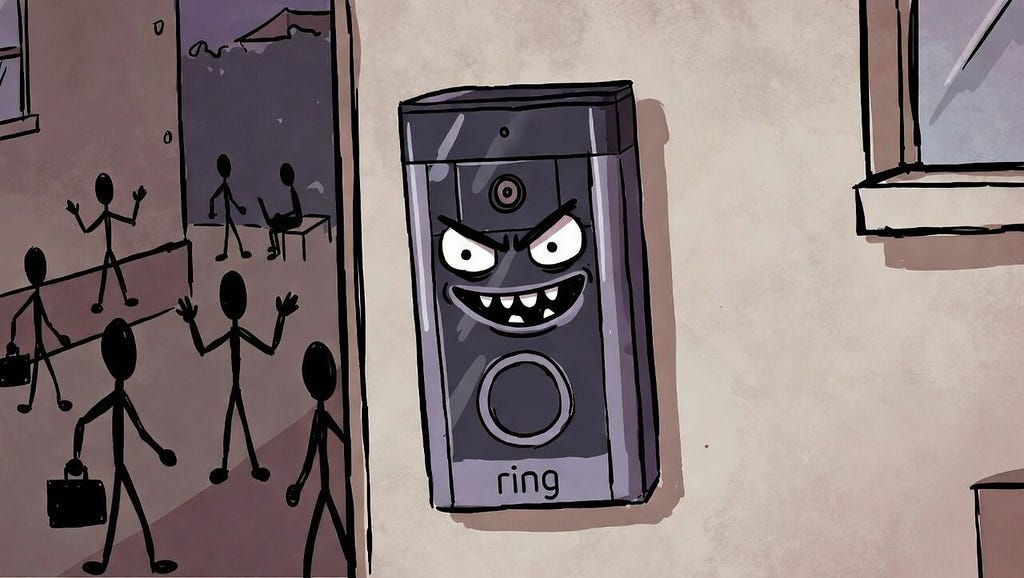

When Amazon’s Ring aired its Search Party advertisement during the Super Bowl, it presented a reassuring narrative: neighbours’ cameras cooperate, a missing dog is found, and communal order is restored. The unease the advert provoked did not stem from its goal. It arose from its premise, namely that individuals can be enrolled into a neighbourhood-scale, real-time AI surveillance network without ever explicitly consenting to participate.

Within days, Ring terminated its planned partnership with Flock Safety, a company criticised for operating large-scale automated licence plate recognition systems used by US law enforcement. Ring cited implementation complexity. The timing suggests a more candid explanation. The partnership made visible a widening gap between what surveillance technologies enable and what the public is prepared to tolerate.

This episode illustrates a defining feature of contemporary AI deployment. Individuals are no longer incorporated primarily as deliberate users; they are absorbed as ambient data contributors. Consent is not requested. It is inferred from physical presence. And when the implications of that inference become politically salient, withdrawal tends to be tactical rather than principled.

When presence becomes participation

Ring’s Search Party feature queries nearby cameras when a missing pet is reported. As Senator Ed Markey observed, this closely resembles neighbourhood-scale surveillance infrastructure. Crucially, Search Party does not operate in isolation. Ring’s Familiar Faces feature applies facial recognition to anyone passing within camera range, continuously scanning and categorising faces without their explicit knowledge or agreement.

Combined, these capabilities enable tracking across residential space through distributed, privately owned sensors. The system no longer merely secures private property. It facilitates continuous monitoring of public movement.

The central tension lies in default inclusion. Neighbours are not asked whether their footage should be analysed for AI searches. Passers-by are not consulted before their biometric features are processed. Cameras function as environmental infrastructure, and physical presence alone becomes sufficient grounds for enrolment.

This is not informed consent. It is participation assumed through exposure.

Yuval Noah Harari captures this historical inversion precisely in Nexus:

“In a world where humans monitored humans, privacy was the default.”

In sensor-saturated environments, the opposite is now true.

The normalisation of suspicion

The problem is not only technical but social. Platforms such as Ring’s Neighbours app have reformatted the neighbourhood watch into what critics describe as participatory mass surveillance. Real-time alerts about “suspicious activity” gamify vigilance, rewarding users who flag anomalies in their surroundings.

The consequences are not evenly distributed. Research consistently shows that people of colour are disproportionately labelled as suspicious on these platforms. What begins as a private perception becomes a data point: timestamped, geotagged, and potentially routed toward police response. The technology does not create implicit bias, but it institutionalises it, converting individual prejudice into operational logic.

When private cameras become the mechanism by which those biases trigger formal intervention, the question of who gets watched, and who gets to watch, becomes a civil rights issue rather than a consumer preference.

The infrastructure of inevitable surveillance

The abandoned Flock Safety partnership reveals how such systems scale institutionally. The proposed integration could have allowed police departments to request Ring footage through Flock’s platform, following a familiar trajectory in which consumer products evolve into mechanisms for data aggregation and institutional access.

This pipeline matters beyond the cancelled partnership. Where law enforcement lacks the legal grounds for neighbourhood-wide warrants, access to aggregated community camera data can serve a functionally equivalent role. Even when platforms restrict direct police access, data frequently travels through intermediaries whose business models involve selling location and behavioural data to government agencies operating well outside local democratic oversight.

Cancelling one visible integration does not seal the pipeline. It merely reroutes it.

Ring’s transformation from consumer hardware to data-centric platform makes this structural rather than accidental. When one person installs a camera, they consent on their own behalf. That camera nonetheless captures neighbours, delivery workers, and passers-by. As camera density increases, opting out becomes practically impossible, since avoidance requires withdrawal from shared space altogether. At that point, consent functions largely as theatre.

Harari notes that in 2023 more than one billion CCTV cameras were in operation globally, roughly one for every eight people. Surveillance is no longer exceptional. It is infrastructural.

The security paradox

There is also a pragmatic argument against centralised surveillance systems that receives less attention than it deserves: they are extraordinarily attractive targets for attack.

Aggregating millions of home cameras into a unified platform creates not only a privacy risk but a security one. A successful breach of a centralised AI hub would grant attackers what security researchers call god-view access: real-time visibility into private residences across entire cities. Footage intended to protect households becomes the means by which those households are exposed.

Ring itself experienced security breaches in its early years, including incidents in which attackers accessed cameras and harassed residents. Those were isolated failures. The risk posed by fully centralised systems, in which aggregation, AI analysis, and institutional access converge, is categorically larger.

Security researchers have long argued that local storage, where footage remains within the home rather than uploaded to corporate servers, is the only architecture that eliminates this class of risk entirely. The dominance of cloud-based models reflects economic incentives tied to data extraction, not technical necessity.

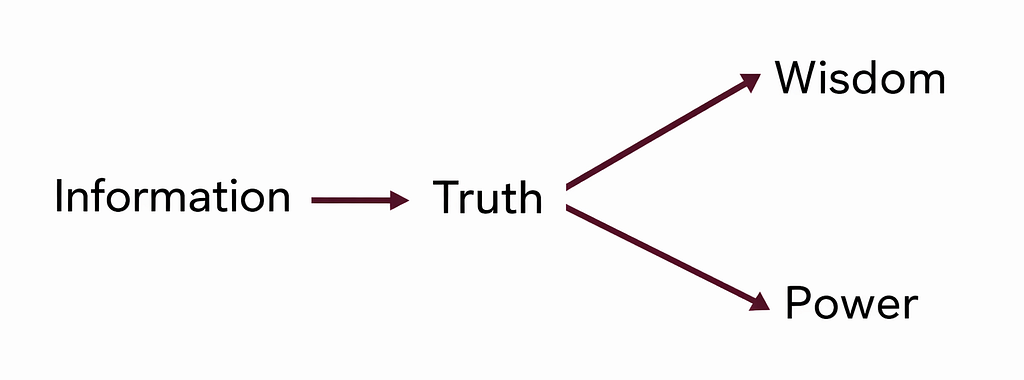

Beyond naive data optimism

Harari describes the naive view of information as the belief that more data naturally yields better outcomes, while ignoring how power and incentives shape its use. Ring’s AI features exemplify this assumption. Expanded footage access and continuous biometric analysis are framed as unambiguous improvements to safety, while questions of agency, governance, and asymmetrical control receive minimal attention.

The proposed Flock integration made this logic explicit. Convenience justified infrastructure. Infrastructure produced data. Data became the substrate for AI analysis and policing. The progression reflects economic alignment rather than malicious intent, but the outcome is the same regardless.

Making consent meaningful again

The challenge is not whether AI should exist in sensor-rich environments. It is how legitimacy is established in spaces that are collectively experienced.

Three governance approaches already exist and could be implemented without delay.

First, default opt-in rather than opt-out. AI surveillance features should require active, informed activation. Behavioural research shows that most users never alter default settings. Reversing this would not restrict the technology; it would make participation genuinely voluntary.

Second, community-level governance. Municipal oversight bodies can determine which capabilities are permissible within neighbourhoods, under what conditions, and with what limits on data retention. Legally constituted community trusts could require approval and independent auditing for third-party and law-enforcement partnerships.

Third, privacy-preserving technical architectures. Federated learning allows AI models to be trained locally on devices, with only model updates, rather than raw data, shared for aggregation. Paired with on-device inference, a neighbourhood system built this way could detect unusual activity without footage ever leaving individual homes. These methods are already deployed in healthcare and finance. Their absence from consumer surveillance products reflects incentive structures, not technical limits.
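To make the third approach concrete, the following is a minimal sketch of federated averaging, the aggregation step most commonly associated with federated learning. It is an illustration under stated assumptions, not a description of how Ring or any vendor builds its products: the model is a toy linear regressor rather than a vision model, and every name in it (`LocalUpdate`, `local_train`, `federated_average`, the `homes` data) is hypothetical. What it shows is the data flow: each home trains on its own data and shares only weights and a sample count.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class LocalUpdate:
    """What a device shares: model weights and a sample count, never raw data."""
    weights: List[float]
    num_samples: int


def local_train(global_weights: Sequence[float],
                features: Sequence[Sequence[float]],
                labels: Sequence[float],
                lr: float = 0.05,
                epochs: int = 5) -> LocalUpdate:
    """Run a few gradient-descent steps of linear regression on one device."""
    w = list(global_weights)
    n = len(features)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in zip(features, labels):
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for j, xj in enumerate(x):
                grad[j] += 2.0 * err * xj / n  # gradient of mean squared error
        w = [wi - lr * g for wi, g in zip(w, grad)]
    return LocalUpdate(weights=w, num_samples=n)


def federated_average(updates: Sequence[LocalUpdate]) -> List[float]:
    """Combine device updates, weighting each by how much data it trained on."""
    total = sum(u.num_samples for u in updates)
    dim = len(updates[0].weights)
    return [
        sum(u.weights[j] * u.num_samples for u in updates) / total
        for j in range(dim)
    ]


# Hypothetical data held privately in two homes; both follow y = 2 * x.
homes = [
    ([[1.0], [2.0]], [2.0, 4.0]),
    ([[3.0]], [6.0]),
]

global_weights = [0.0]
for _ in range(10):  # ten rounds of local training followed by aggregation
    updates = [local_train(global_weights, f, l) for f, l in homes]
    global_weights = federated_average(updates)

print(global_weights)  # the shared weight should move toward 2.0
```

In this sketch the aggregator only ever sees `LocalUpdate` objects. Real deployments typically add further safeguards, such as secure aggregation or differential privacy, because model updates can themselves leak information about the data they were trained on.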

From retreat to redesign

Ring’s retreat from the Flock Safety partnership does not resolve the underlying problem. The cameras remain installed. The AI capabilities persist. Partnerships can be quietly reconfigured through different intermediaries. The infrastructure endures.

This pattern extends far beyond Ring. Smart-city CCTV, mobile location tracking, and digital health monitoring follow the same trajectory. Presence becomes participation. Consent becomes assumed. Public concern produces episodic retreats, not structural change. The systems remain intact.

The question, then, is not whether AI inevitably erodes human agency. It is whether we continue to permit systems to treat mere presence as default input rather than as a condition requiring collective authorisation. In sensor-saturated environments, individual consent loses its protective force. When biometric processing is continuous, public anonymity erodes invisibly. When surveillance infrastructure is centralised, a single breach can undo every promise of safety on which the system was sold.

Crucially, these systems do not meaningfully self-correct through public discomfort alone. Left unconstrained, data-driven infrastructures expand until legitimacy breaks, at which point withdrawal becomes reputational rather than corrective. Ring’s retreat is best understood not as a resolution, but as a pressure release.

If AI is to be deployed in the name of safety, it must be governed with mechanisms equal to its reach: transparency that is operational rather than rhetorical, collective decision-making rather than individualised settings, and enforceable limits that bind platforms as tightly as they bind users. What is required are structures that prevent legitimacy crises from accumulating in the first place, rather than relying on backlash to correct them after the fact.

In environments where visibility has become the default, the task is not to abandon AI. It is to redesign it so that consent becomes meaningful again: deliberate rather than incidental, collective rather than individual, enforceable rather than assumed.

Andrea Filiberto Lucas is a Research Support Officer and MSc student in Artificial Intelligence at the University of Malta. He graduated summa cum laude with a BSc (Hons.) in IT (AI) and has published an IEEE peer-reviewed paper, with interests in Computer Vision and Applied AI.

Surveillance by default, consent by assumption was originally published in UX Collective on Medium.