Building intuitive AI products requires designing for context management

Large language models are becoming remarkably good at executing tasks.

They can summarize documents, generate content, analyze data, and even reason through complex problems in ways that feel almost human. In many cases, they outperform what most people could do on their own.

And yet, they still get things terribly wrong. Not just in obvious ways, such as hallucinations or misread instructions, but in subtler ways: answers that feel right on the surface but miss something essential underneath. A confident-sounding answer can be correct and still be far from the most valuable answer possible.

It’s easy to assume this is a limitation of intelligence. That the models just aren’t “smart enough” yet. But that’s not quite right. The problem isn’t intelligence. It’s context. These systems will give you the best answer they can, packaged in a way that sounds very convincing. But without explicit direction, they cannot know whether they had the right information to begin with.

The Real Problem: Context, Not Capability

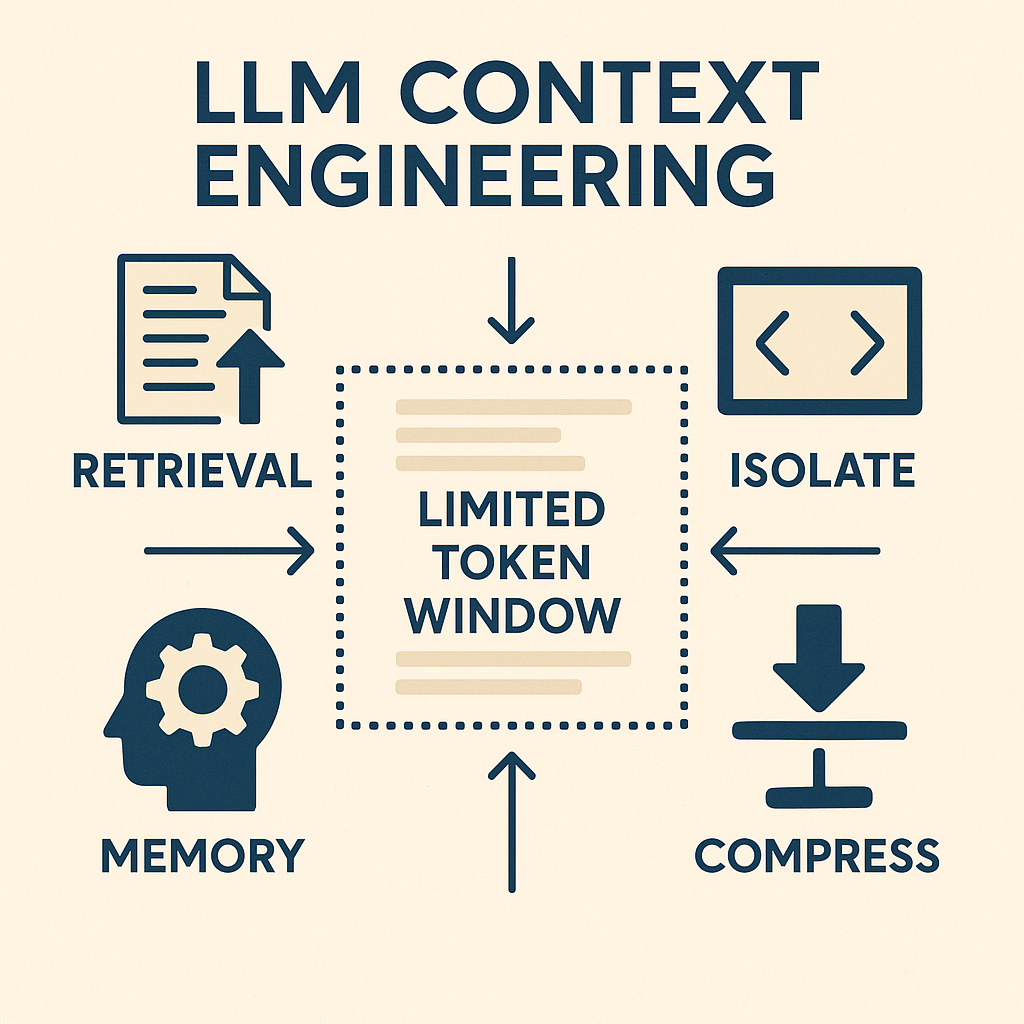

When we point a large language model at a problem, we define the boundaries of what it can see through the data we connect to it and the history we share with it. We determine what gets included. What gets left out. What gets emphasized. What gets ignored. The model operates entirely within that frame.

Which means it can produce a highly coherent, well-reasoned answer… to the wrong version of the problem. This is where things start to break. Because context is not just data. It’s the full shape of a situation, the history, the people, the constraints… the definition of “good.”

Most of this is not obvious. Much of it is not written down. Some of it changes over time. And yet, all of it influences what the “right” answer should be. Without that, the system fills in the gaps. It makes reasonable assumptions. It leans on patterns. Sometimes those assumptions are correct. Often, they’re just plausible.

And that’s the danger. A model doesn’t know when it’s missing something important. It just keeps going. Producing outputs that are technically sound, but directionally off. Answers that feel complete, but are grounded in an incomplete understanding of the situation.

But even when the relevant context is brought in, another problem quickly emerges. There is simply too much of it.

We’ve long understood that humans struggle with choice overload. When faced with too many options, we don’t evaluate everything. We simplify. We rely on what is available, recent, or easy to process. We choose what feels right, not necessarily what is most correct.

The same dynamic now applies to how context is selected by LLMs. These systems cannot meaningfully reason over all possible information. They generally settle on a set of assumptions and build from there. And we have little insight into why they choose those facts, no more than we would get from asking a human the same question.

In many ways, these systems fall into the same pattern we do. They operate on a subset of what exists and make up things to fill gaps. The difference is that humans can step back and question their frame, relying on their intuition. We can ask what might be missing. We can reconsider what context truly matters. Then we can bring that thinking back to the system, ensuring it is operating in the most effective way possible.

From prompting to designing context

We are moving from a world of prompt design to a world of context design. This is a subtle shift, but an important one. Prompting is about phrasing. Context design is about the history and insight that are included with that phrasing. It’s the difference between asking a better question and ensuring the system understands the right version of the problem.


Designing for context means thinking upstream: What information should be brought in? How do different pieces relate to each other? What should stay persistent over time?

It requires moving beyond one-off interactions and toward something more structured. Most meaningful work doesn’t happen in isolation. It builds over time. And yet, many of the ways we interact with these systems still treat each exchange as if it exists on its own.

Designing for context is about preventing that reset. It’s about creating continuity and ensuring that the system operates with an evolving understanding of the problem space. This requires a new way of designing products, one that gives users ways to manage the system’s contextual intelligence.

The Rise of Context Management Design Patterns

We’re already starting to see products evolve in response to the shift toward context management. This is not happening as a set of incremental improvements, but as a fundamentally different way of structuring interaction. All the major AI players are building similar patterns into their core chat functionality, signaling a broader shift in how these systems are designed.

These are not just features layered on top of existing chat systems. They represent a new category of tools for managing context, capabilities that allow information to be gathered, structured, and reused over time. As a result, conversations are no longer isolated exchanges, but are grounded in a persistent and evolving set of inputs that shape how the system responds.

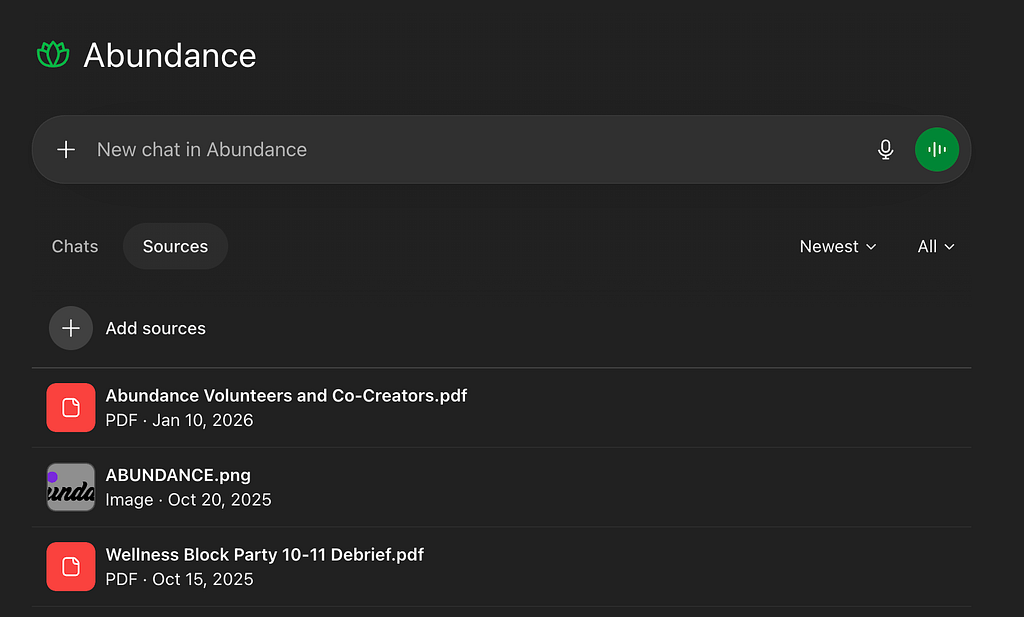

At the highest level, we see this in ChatGPT Projects, Claude Projects, Google’s NotebookLM, and Copilot Notebooks. All of these are examples of the first pattern: context containers. These features ensure that context is no longer something that exists only within a single interaction, but instead is stored, scoped, and reused across time. This allows understanding to accumulate rather than reset with each new prompt, fundamentally changing how users engage with these systems.

While each of these solutions offers its own unique features and capabilities, they all point to the same underlying realization. Users need a place to store relevant content and context in a way that can be easily accessed and reused. This marks a clear move away from one-off exchanges and toward environments designed for thinking, where documents, notes, conversations, and artifacts live together and shape how the model interprets each new request.
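To make the idea of a context container concrete, here is a minimal sketch in Python. It is an illustration only, not how any of the products above are implemented: the `ContextContainer` class, its fields, and the `build_prompt` method are all hypothetical names chosen for this example. The point it shows is that stored sources and earlier requests travel with every new request, so understanding accumulates instead of resetting.

```python
from dataclasses import dataclass, field


@dataclass
class ContextContainer:
    """Hypothetical project-style container; not any vendor's actual API."""

    name: str
    sources: dict[str, str] = field(default_factory=dict)  # title -> text
    history: list[str] = field(default_factory=list)       # earlier requests

    def add_source(self, title: str, text: str) -> None:
        """Store a document so later requests can draw on it."""
        self.sources[title] = text

    def build_prompt(self, request: str) -> str:
        """Combine stored sources and earlier requests with the new request."""
        parts = [f"[{title}]\n{text}" for title, text in self.sources.items()]
        if self.history:
            parts.append("Earlier requests:\n" + "\n".join(self.history))
        parts.append(f"Request: {request}")
        # Remember this request so the next one is not a fresh start.
        self.history.append(request)
        return "\n\n".join(parts)


project = ContextContainer("Product launch")
project.add_source("Brief", "Ship in Q3.")
first = project.build_prompt("Draft a timeline")
second = project.build_prompt("Shorten it")
```

In this sketch the second prompt still carries the brief and the earlier request, which is the continuity the pattern is meant to provide.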

As systems begin to connect to more sources of context, a new challenge emerges. Not all available context should be used in every interaction, and including everything can quickly degrade the quality of the output. This need introduces the second emerging pattern: selective referencing. More context is not inherently better; targeted context is. Including everything introduces noise and makes it harder for the system to identify what actually matters. Designing for context requires making intentional decisions about what should be included, and just as importantly, what should be excluded.

We can already see this pattern being implemented in different ways. In NotebookLM, for example, users can select specific sources through simple interface controls like checkboxes, allowing them to narrow the system’s focus. In broader chat interfaces, this idea is expanding as systems gain access to more external sources. Claude refers to these as connectors, giving users the ability to selectively ground their conversations in only the information they choose to include.
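Selective referencing can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a description of how NotebookLM or Claude work internally: the `Source` class, its `selected` flag (standing in for a checkbox), and the `build_context` function are hypothetical names for this example.

```python
from dataclasses import dataclass


@dataclass
class Source:
    """Hypothetical source record; `selected` mirrors a checkbox in the UI."""

    title: str
    text: str
    selected: bool = True


def build_context(sources: list[Source]) -> str:
    """Return only the sources the user has left checked."""
    return "\n\n".join(
        f"[{s.title}]\n{s.text}" for s in sources if s.selected
    )


sources = [
    Source("Interview notes", "Users want fewer steps at checkout."),
    Source("Old roadmap", "Plan from two years ago.", selected=False),
]
context = build_context(sources)
```

The unchecked roadmap never reaches the model, so it cannot add noise or pull the answer toward an outdated plan.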

Beyond context containers and selective referencing, a third pattern is emerging: instructions. This pattern appears across both general chat and context-specific environments, allowing users to provide additional guidance about how the system should behave. Instructions help shape not just what the system considers, but how it interprets and responds to that information.

In general chat environments, instructions allow users to define preferences such as tone, style, and behavior. As OpenAI frames it, this gives users the ability to guide how the system responds across interactions. Within context containers, however, instructions become more specific and more powerful, because they are applied within a narrower and more defined scope. Claude Projects, for example, enables users to define this level of specificity through its instruction interface, helping shape how the system operates within that context. This creates a more aligned and intentional interaction, where the system is not just informed by content, but guided by user-defined expectations.
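One way to picture the two levels of instructions is as layers that are combined before a request is sent. The Python sketch below is an assumption-laden illustration: `compose_instructions` is a hypothetical function, and the rule that container-level instructions take priority over general preferences is a design choice made for this example, not documented behavior of any product.

```python
def compose_instructions(general: str, scoped: str = "") -> str:
    """Layer container-specific instructions on top of general preferences.

    Assumption for this sketch: the narrower scope wins when the two conflict.
    """
    sections = [f"General preferences:\n{general.strip()}"]
    if scoped.strip():
        sections.append(
            "Instructions for this project (these take priority):\n"
            + scoped.strip()
        )
    return "\n\n".join(sections)


system_text = compose_instructions(
    general="Be concise. Use plain language.",
    scoped="Write for a clinical audience. Cite the uploaded study by section.",
)
```

General preferences apply everywhere, while the scoped section only exists inside the container, which is what makes it more specific and more powerful.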

All three of these emerging patterns show how companies are thinking about letting users manage context. They point to the realization that context management is a new, high-value need for users as they interact with LLMs.

The final piece is that context is not static. It changes as new information becomes available. Documents get updated. Decisions shift. Priorities move. Effective systems will need to account for this by allowing context to be updated, refined, and restructured over time, rather than treating it as a fixed input. Together, these patterns point toward a new design space, one focused not on interaction alone, but on how information is gathered, structured, and maintained across time.

The New Craft

In a world where intelligence is increasingly abundant, the differentiator is no longer the ability to produce answers. Instead, it is the ability to shape the conditions under which those answers are created. This means deciding what matters, recognizing what is missing, and structuring information in a way that leads to better outcomes.

We are watching the emergence of a new form of taste, but not in the aesthetic sense. This is contextual judgment, the ability to evaluate a complex and incomplete landscape and determine what deserves attention. As workers begin to orchestrate multiple AI systems and agents, each responsible for different tasks, this skill becomes increasingly important. The people who excel in this environment will not be the ones who simply know the most. They will be the ones who can consistently orient themselves and the systems they use toward what actually matters.

Thoughtful design is critical for building effective AI systems. It makes the invisible work of context selection more visible, bridging the gap between capability and effective use. Over time, this supports the development of stronger habits and mental models, enabling users to operate more effectively within increasingly complex systems.

Design is in the driver’s seat. It has the opportunity to shape technology that not only meets the demands of the moment, but also helps users grow alongside it. In doing so, it can ensure that as these systems become more powerful, the people using them become more effective as well.

Context matters… A lot was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.