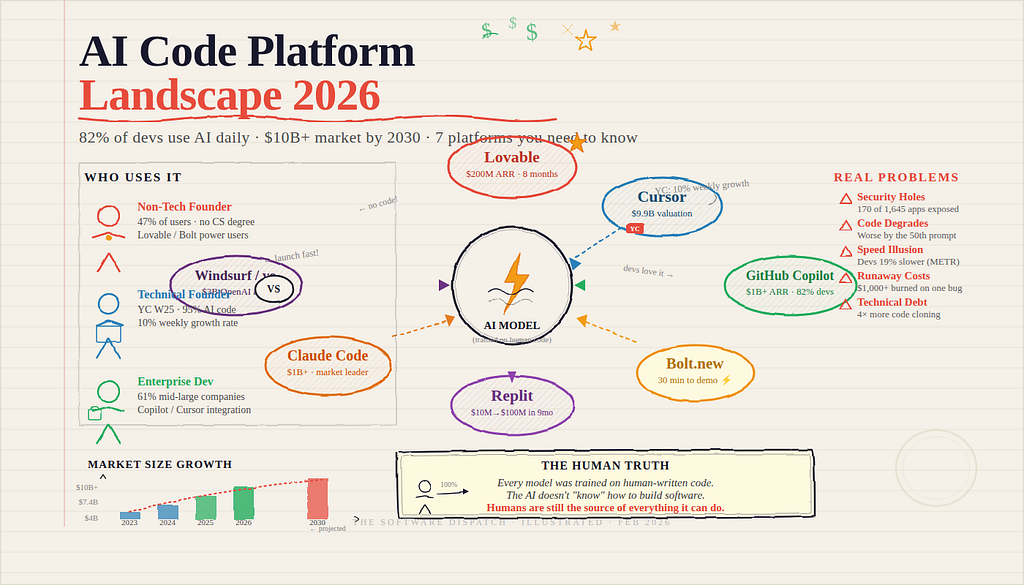

How Lovable, Cursor, and Bolt are rewriting who gets to build software — and the hidden costs nobody is talking about.

A quarter of YC’s Winter 2025 startups ship codebases that are 95% AI-written. And somewhere in Silicon Valley, a 22-year-old with no CS degree just launched an app used by 40,000 people, without writing a single line. Here’s the full, unfiltered story of who’s building the future, what’s working, what’s failing, and what it all means.

The vibe coding uprising

In February 2025, AI pioneer Andrej Karpathy coined a phrase that sent shockwaves through software communities worldwide: “vibe coding.” He described it as a way of coding where you “fully give in to the vibes, embrace exponentials, and forget that the code even exists.” At the time, many professional developers rolled their eyes. Six months later, they were using it themselves.

The term captured something genuinely unprecedented: a new generation of tools that let anyone — designers, marketers, founders, students — describe an app in plain English and watch it get built in real time. No compiler knowledge. No debugging in terminals. No Stack Overflow. Just a conversation with a machine that builds things.

What happened next defied almost every prediction. Lovable, a Stockholm-based startup launched in early 2024, reached $100M in annual recurring revenue in under 8 months, one of the fastest ARR trajectories in enterprise software history. (Source: CB Insights)

Replit went from $10M to $100M ARR in 9 months after launching its AI Agent. Cursor’s parent company, Anysphere, reached a $9.9 billion valuation by June 2025. The AI coding platform market was valued at $7.37 billion in 2025 and is on track to reach nearly $24 billion by 2030.
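The growth figures above are easier to compare as compound rates. The sketch below is a plain compound-growth calculation, not something taken from the cited sources. It assumes the 2025 to 2030 forecast spans five annual compounding periods, treats “nearly $24 billion” as exactly $24 billion, and assumes Replit’s growth compounded monthly; the helper name `implied_growth_rate` is hypothetical.

```python
def implied_growth_rate(start: float, end: float, periods: int) -> float:
    """Return the constant per-period growth rate that turns `start` into `end`."""
    if start <= 0 or periods <= 0:
        raise ValueError("start and periods must be positive")
    return (end / start) ** (1 / periods) - 1


# Market forecast: $7.37B in 2025 to roughly $24B in 2030 (five annual periods assumed).
market_cagr = implied_growth_rate(7.37, 24.0, 5)

# Replit: $10M to $100M ARR in 9 months (monthly compounding assumed).
replit_monthly = implied_growth_rate(10.0, 100.0, 9)

print(f"Implied market CAGR: {market_cagr:.1%}")
print(f"Implied Replit monthly growth: {replit_monthly:.1%}")
```

Under these assumptions the market forecast implies roughly 27% growth per year, while Replit’s jump implies roughly 29% growth per month, which is why its trajectory stands out.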

Timeline of the explosion

(Source: Y Combinator Blog)

Feb 2025 — Karpathy coins “vibe coding.” The term goes viral. Non-technical founders start flooding AI builder platforms. YC CEO Garry Tan calls it “the dominant way to code.”

March 2025 — YC drops its bombshell. 25% of Winter 2025 YC startups report that 95% of their codebase is AI-generated, and these are highly technical founders who could have coded it themselves. The industry gasps.

April 2025 — Guardio Labs sounds the alarm. Security researchers coin “VibeScamming” after finding that AI app builders, with Lovable among the most susceptible, can be prompted into building convincing phishing pages and scam campaigns. Separately, a study published in May 2025 finds that 170 of 1,645 Lovable-created apps have security vulnerabilities exposing user data.

May 2025 — OpenAI reportedly agrees to buy Windsurf for $3B. The deal collapsed in July 2025, but it remains one of the biggest signals yet that Big Tech is treating AI coding as a core battleground. Microsoft, Google, Amazon, and IBM all accelerate competing products.

July 2025 — The METR study shocks developers. A rigorous independent study finds experienced developers using AI tools took 19% longer to complete complex tasks, despite believing they were 20% faster. The perception-reality gap is stark.

Dec 2025 — The $4B market crystallizes. CB Insights reports that three players (GitHub Copilot, Claude Code, and Anysphere) now hold 70%+ of the coding AI agent market. All have crossed $1B ARR.

Meet the platforms: A field guide

There are now over 130 active players in the AI coding platform space. But the real action clusters around a handful of names that keep appearing in developer Slack channels, Reddit threads, and YC pitch decks. Here is what they actually are, who built them, and what their real numbers look like:

Lovable — No-code king

Stockholm-born. Built for non-technical founders. Describe your app in plain English; Lovable builds a full-stack React + Supabase app with shareable URL, GitHub sync, and one-click deploy. The go-to for solopreneurs validating startup ideas. The fastest-growing platform in the space.

$200M ARR — targeting $1B by summer 2026

Cursor — Dev power tool

Made by Anysphere. A VS Code-based AI code editor and the choice for professional developers. Supports Claude, GPT-4, and other models. Built for working in production codebases with proper architecture. Requires technical knowledge but offers unmatched depth. Valued at $9.9B.

$500M+ ARR — 2 years to get there

Bolt.new — Speed demon

Browser-based. Zero setup. Type what you want and get a shareable URL in under 30 minutes, the fastest time-to-demo of any platform. Built on StackBlitz, with a Supabase backend. Best for hackathon prototypes and quick experiments, but code quality degrades over iterations.

Fast-growing — $100M+ ARR threshold crossed

Replit — Full environment

The cloud IDE with an embedded AI Agent. Educator-favored. Strong for collaborative team environments. Built-in databases, deployment, and real-time multiplayer coding. Most powerful “middle ground” platform — too complex for pure beginners but excellent for learning developers and startup teams.

$10M → $100M ARR — in under 9 months

GitHub Copilot — Enterprise standard

Microsoft’s flagship. Integrates into VS Code, JetBrains, and more. The largest user base of any AI coding tool. 82% of developers surveyed use it — the default entry point for enterprise teams. Code completion and inline suggestions rather than full app generation. $10–39/month.

$1B+ ARR — market leader by user count

Claude Code — Terminal agent

Anthropic’s command-line AI engineer. Deep code understanding, multi-file reasoning, and agentic task execution. CB Insights named it the “runaway market leader” among LLM providers for pure coding use cases. For developers who want maximum intelligence with full control. Crossed $1B ARR.

$1B+ ARR — top 3 market position

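Windsurf — Agentic IDE

Formerly Codeium. IDE-based with deep code understanding and an agentic “flow” mode. Strong code quality scores in independent benchmarks (8.5/10). OpenAI agreed to acquire it for a reported $3 billion in May 2025, but the deal collapsed in July 2025, when Google hired Windsurf’s leadership and Cognition acquired the rest of the company. The fight over it was one of the biggest statements yet about where AI coding is heading.

$3B OpenAI deal — reported May 2025, collapsed July 2025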

v0 by Vercel — UI specialist

Vercel’s AI-powered UI generator. Highest quality score in benchmarks (9/10). Specializes in component and UI generation using React + shadcn/ui. Not a full app builder but the best tool if you want beautiful, production-quality front-end components fast. Free tier available.

Free + pro tiers — part of Vercel ecosystem

“A year ago, they would have built their product from scratch. But now 95% of it is built by an AI. You don’t need a team of 50 engineers anymore. Companies are reaching $10 million in revenue with teams of less than 10.” – Garry Tan, CEO, Y Combinator, March 2025

Who is actually using these tools?

The data paints a picture that surprises most people. This isn’t just developers upgrading their workflow. The user base of AI coding platforms is fundamentally different from what the software world has ever seen before.

Three distinct tribes are using these tools, each for very different reasons:

Tribe 1: The non-technical founder

The biggest story of the AI coding era isn’t that developers got faster — it’s that the definition of “developer” has exploded. 47% of people now applying AI to coding do so for work or school, and 41% use it for personal projects, according to Menlo Ventures’ 2025 State of Consumer AI report. These are designers, product managers, marketers, and domain experts who previously needed to hire engineers to build their ideas. With Lovable or Bolt, they don’t anymore.

Gartner predicted that by 2025, 70% of new applications developed by organizations would use low-code or no-code technologies, and that shift is visible. Real estate agents are building property management tools. Teachers are building grading apps. Small business owners are building custom CRMs. The phrase you see repeated constantly in community forums:

“Lovable figured out what to build when I couldn’t.”

Tribe 2: The technical startup founder

This is the group that surprised everyone, including YC. These aren’t non-technical people using AI because they have no choice. They’re highly skilled engineers using AI because it’s dramatically faster. The YC Winter 2025 data is stunning: the founders behind the 25% of startups with 95% AI-generated code were fully capable of writing that code manually. They chose not to. The batch grew 10% per week collectively, the fastest-growing YC batch on record. Companies in this wave are reaching $10M in revenue with teams of fewer than 10 people.
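To see how unusual 10% weekly growth is, the sketch below compounds it. The source gives the 10% figure for the batch collectively; the 12-week window here is an assumption, and the 52-week figure is a hypothetical extrapolation the source does not claim. `compound_multiple` is an illustrative helper, not a YC metric.

```python
def compound_multiple(rate_per_period: float, periods: int) -> float:
    """Growth multiple after compounding `rate_per_period` for `periods` periods."""
    return (1 + rate_per_period) ** periods


# 10% weekly growth over an assumed 12-week window and a hypothetical full year.
twelve_weeks = compound_multiple(0.10, 12)
one_year = compound_multiple(0.10, 52)

print(f"12 weeks at 10%/week: {twelve_weeks:.2f}x")
print(f"52 weeks at 10%/week: {one_year:.0f}x")
```

Twelve weeks at that pace roughly triples revenue; sustained for a full year it would mean about 142x, which is why even a short stretch of 10% weekly growth draws attention.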

Tribe 3: The enterprise developer

Large enterprises hold 63% of the market, and their adoption is quieter but massive: GitHub Copilot integrated into existing IDE workflows, Amazon Q Developer (formerly CodeWhisperer) for AWS shops, and internally deployed fine-tuned models for regulated industries. These teams aren’t vibe coding; they’re using AI for code review, documentation, test generation, and completing specific functions. As of 2025, 61% of mid-to-large U.S. software enterprises have integrated AI pair-programming into their CI/CD pipelines.

Sector distribution — who uses it

- SaaS products — 34%

- Fintech — 21%

- Healthtech — 15%

- E-commerce — 13%

- Other (Education / Government / Misc.) — 17%

The real problems nobody talks about

Every platform announcement shows beautiful demos. Every founder testimonial is glowing. But spend enough time in the trenches (building 47 applications across these tools, as one reviewer did) and a very different picture emerges. Here are nine critical issues users face globally, backed by real research:

01

Security holes

A May 2025 study found 170 of 1,645 Lovable-built apps exposed personal user data. Guardio Labs discovered “VibeScamming” — attackers can inject malicious prompts that cause AI to generate backdoors. No platform auto-audits for security. Human review is non-negotiable for production apps.

02

Code degrades over time

Multiple independent studies confirm: the 50th prompt produces measurably worse code than the 5th. As projects grow, AI-generated code becomes inconsistent and difficult to maintain. The context window fills up, patterns break down, and the codebase becomes something “nobody fully understands.”

03

The perception gap

The July 2025 METR study stunned the industry: experienced developers using AI tools took 19% longer on complex tasks despite feeling 20% faster. The illusion of speed is real. Code appears fast — but debugging AI output, understanding what was generated, and fixing mistakes eats the time saved.
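The gap is easier to feel in concrete minutes. This minimal sketch assumes a hypothetical task that takes 60 minutes without AI, reads “19% longer” as 1.19 times the baseline, and reads “20% faster” as 20% less time. METR’s figures are averages across many tasks, so this illustrates the headline numbers rather than reproducing the study; `perception_gap` is an illustrative name.

```python
def perception_gap(baseline_minutes: float, actual_change: float, perceived_change: float):
    """Compare measured and perceived task times against a no-AI baseline.

    Changes are fractional changes in completion time:
    +0.19 means 19% longer, -0.20 means 20% less time.
    """
    actual = baseline_minutes * (1 + actual_change)
    perceived = baseline_minutes * (1 + perceived_change)
    return actual, perceived, actual - perceived


# Hypothetical 60-minute task, using the METR headline figures.
actual, perceived, gap = perception_gap(60, 0.19, -0.20)
print(f"Measured: {actual:.1f} min, perceived: {perceived:.1f} min, gap: {gap:.1f} min")
```

On a one-hour task, a developer would believe they finished in about 48 minutes while the clock said about 71, a gap of more than 23 minutes per task.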

04

Runaway token costs

Consumption-based pricing is wildly unpredictable during iterative debugging. Bolt.new users have burned 2+ million tokens fixing a single bug. Some spent over $1,000 on a single project. Replit Agent credit depletion during active iteration regularly shocks users. There’s no cost ceiling.
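One plausible mechanism behind these spikes is that each debugging attempt resends a conversation and project context that keeps growing. The sketch below models that assumption with hypothetical numbers (a 20,000-token starting context that grows by 5,000 tokens per attempt); it is not based on Bolt.new’s or Replit’s actual billing, and both function names are illustrative. It also shows how a self-imposed token budget would act as the missing cost ceiling.

```python
def debugging_tokens(attempts: int, base_context: int, growth_per_attempt: int) -> int:
    """Total tokens used if every attempt resends a context that keeps growing.

    Attempt i (counting from 0) sends base_context + i * growth_per_attempt tokens.
    """
    return sum(base_context + i * growth_per_attempt for i in range(attempts))


def attempts_within_budget(budget_tokens: int, base_context: int, growth_per_attempt: int) -> int:
    """Count how many attempts fit under a hard token budget (a self-imposed cost ceiling)."""
    if base_context <= 0 or growth_per_attempt < 0:
        raise ValueError("base_context must be positive and growth_per_attempt non-negative")
    used, attempts = 0, 0
    while used + base_context + attempts * growth_per_attempt <= budget_tokens:
        used += base_context + attempts * growth_per_attempt
        attempts += 1
    return attempts


# Hypothetical figures for illustration only, not real platform data.
total = debugging_tokens(attempts=30, base_context=20_000, growth_per_attempt=5_000)
print(f"30 debugging attempts consume {total:,} tokens")

capped = attempts_within_budget(1_000_000, base_context=20_000, growth_per_attempt=5_000)
print(f"A 1,000,000-token budget stops the loop after {capped} attempts")
```

Because each attempt is larger than the last, total usage grows roughly with the square of the number of attempts, which is one way a single stubborn bug can run past two million tokens.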

05

Compliance blind spots

For regulated industries — finance, healthcare, government — none of these platforms meet production compliance requirements. No SOC 2. No HIPAA-ready default configurations. No audit trails. All platforms are explicitly prototyping tools. Trying to ship to production without human review in these sectors is genuinely dangerous.

06

Vendor lock-in

Bolt.new and Replit make migration painful. Your project becomes deeply tied to their infrastructure. v0 and Lovable are easiest to migrate away from. For startups choosing these platforms, the exit strategy is rarely considered upfront — and becomes a serious problem at scale.

07

The “almost right” frustration

Stack Overflow’s 2025 survey found that developers’ single biggest AI frustration, cited by 66%, is “AI solutions that are almost right, but not quite.” This near-miss problem is uniquely maddening: the AI produces something that looks correct and passes casual inspection, but breaks at the edges. Debugging it is an entirely new cognitive skill.

08

Technical debt explosion

AI-assisted coding is linked to 4x more code cloning, which increases maintenance effort over time. Google’s 2024 DORA report estimated that every 25% increase in AI adoption is associated with a 7.2% drop in delivery stability. 62.4% of developers cite technical debt as their top frustration, and AI is accelerating its accumulation for teams without strong review practices.

09

No real production-ready code

Every benchmark study reaches the same conclusion: no AI coding platform produces code you can ship to production without significant manual finishing. The speed gains are genuine for prototypes. But the quality gap is equally real for anything that needs to last beyond a demo.

AI coding vs. Manual coding: The real comparison

The debate “AI vs. human coding” misframes the reality. The better question is: for what tasks, for what users, at what stage does AI coding deliver genuine value — and where does it create hidden costs?

Time to first prototype

AI coding platforms

Applications can be generated in 28–45 minutes. These tools provide dramatic gains for non-technical founders and small teams seeking rapid validation.

Manual human coding

Initial builds typically take hours to days depending on architecture, complexity, and stack decisions.

Assessment: AI platforms provide a significant advantage in early-stage prototyping and rapid iteration.

Code quality (Simple applications)

AI coding platforms

Generally sufficient for MVPs and demos. Generated code is functional and often built on consistent starter stacks.

Manual human coding

Quality depends entirely on developer skill level. Strong engineers can produce clean, extensible foundations from the start.

Assessment: For straightforward applications, AI-generated code is often adequate.

Code quality (Complex systems)

AI coding platforms

Code quality degrades over time. As projects expand, inconsistencies increase, architectural patterns fragment, and maintainability becomes challenging.

Manual human coding

Architectural integrity is intentionally designed and maintained. Technical decisions are deliberate and aligned with long-term system goals.

Assessment: For complex or long-lived systems, manual engineering maintains structural coherence more effectively.

Security

AI coding platforms

Elevated vulnerability risk without manual auditing. Prompt injection risks and insecure defaults require active oversight.

Manual human coding

Security patterns are applied intentionally through training, experience, and established review processes.

Assessment: Production-grade security still requires human review regardless of AI assistance.

Speed (Senior developers)

AI coding platforms

Studies indicate experienced developers may take longer on complex tasks when using AI tools, despite a perception of increased speed.

Manual human coding

Expert engineers often move faster on nuanced architectural and system-level decisions.

Assessment: AI accelerates repetitive tasks but does not consistently outperform experienced developers on sophisticated work.

Speed (Junior developers and non-technical users)

AI coding platforms

Documented productivity gains of approximately 26% in certain studies. Enables non-coders to build functional applications.

Manual human coding

Requires months or years of training to achieve similar output.

Assessment: AI significantly lowers the barrier to entry.

Cost

AI coding platforms

Subscription pricing combined with unpredictable token consumption. Costs can spike significantly during debugging cycles.

Manual human coding

Fixed salary costs. High per-hour expense but predictable for planning purposes.

Assessment: AI lowers upfront cost but introduces variability.

Startup viability

AI coding platforms

Small teams can achieve meaningful revenue milestones with minimal engineering headcount. Rapid iteration supports aggressive growth experimentation.

Manual human coding

Requires larger teams to reach similar output velocity.

Assessment: AI creates leverage in early-stage startups.

Scalability

AI coding platforms

Production systems often require significant refactoring. AI-generated code rarely scales without human rework.

Manual human coding

Systems can be designed for scale from inception when architected by experienced engineers.

Assessment: Scalability depends heavily on human oversight.

Learning and understanding

AI coding platforms

Risk of using code that developers do not fully understand. May create “black box” dependencies.

Manual human coding

Deep understanding compounds over time. Knowledge of system components strengthens architectural decision-making.

Assessment: Long-term technical mastery requires active engagement beyond generated output.

AI coding platforms are powerful acceleration tools. They are highly effective for prototyping, validation, and enabling non-technical creators. However, long-term system stability, security, architectural integrity, and scalability still rely heavily on human engineering judgment.

The strategic question is not whether AI replaces developers. It is where AI meaningfully augments them — and where human expertise remains indispensable.

The verdict isn’t simple. AI tools are genuinely transformative for prototyping, validation, and non-technical builders. They’re problematic for production systems without human oversight. The “right” answer depends entirely on your position in the product lifecycle and your risk tolerance.

Where the money is: Market data

The total AI coding market is on a trajectory that analysts estimate will reach $99 billion by 2034. The combined equity funding raised by players in this space already exceeded $5.2 billion in 2025 alone, more than double the $2 billion raised the year before. Over 60 mergers, acquisitions, and partnerships occurred in the AI developer tools ecosystem in 2024–2025. This is not a niche software category anymore. It is a platform war. (Source: CB Insights Market Map)

Geography matters too: North America holds 43% of the current market but Asia-Pacific is the fastest-growing region at a 27.4% CAGR. India, particularly, is seeing explosive growth in AI-assisted development across both enterprise adoption and startup formation. The IT and telecommunications sector leads usage at 29.4% of the market — but banking and financial services (BFSI) is the fastest-growing vertical at 28.13% CAGR.

The human truth at the core

Here is the thing that gets buried under all the revenue numbers and platform wars: every AI coding platform in existence was built by humans. Trained by humans. Fed data by humans. Evaluated by humans. Corrected by humans. The “intelligence” that pops out a React app from a text prompt is the crystallized output of millions of hours of human code written by developers over decades, scraped from GitHub repositories, Stack Overflow answers, documentation pages, and technical blogs.

This isn’t a criticism — it’s a clarification. The AI doesn’t “know” how to build software the way a senior engineer knows. It pattern-matches. It predicts what code should follow the tokens you gave it, based on what it saw in training.

That is why it fails in ways that feel eerily specific: it produces code that looks correct but has subtle logical errors. It follows conventions without understanding the reasoning behind them. It solves problems it’s seen before brilliantly; it stumbles on genuinely novel architectural challenges.

This is why the most dangerous users of AI coding platforms are the ones who trust them completely. The safest users are those who treat AI output the way a senior developer treats a junior developer’s pull request: read it carefully, understand it fully, question its assumptions, test its edge cases, and only then merge it.

71% of developers say they do not merge AI-generated code without manual review. That number should be 100%. The 29% who skip review are accumulating technical debt faster than they realize, in a codebase increasingly full of code whose logic they don’t fully understand.

The METR study looked only at experienced open-source developers, and its most interesting implication appears when it is read alongside studies reporting gains of around 26% for less-experienced developers: AI doesn’t make developers faster across the board. It appears to make less-experienced developers faster while potentially slowing down senior engineers on complex problems. This suggests the technology is doing something more interesting than pure acceleration: it is compressing the skill gap.

A junior developer with AI tools can produce work that once required mid-level experience. A non-technical founder can now build what once required a small engineering team. That democratization is real and meaningful. But it comes with the risk of deploying systems built without the depth of understanding that comes from having built them the hard way.

Are people actually launching real products?

Short answer: yes, but with important asterisks.

YC’s Winter 2025 data is the most compelling evidence. Companies in that cohort growing 10% per week with sub-10-person teams and genuine customers are not demos or experiments — they’re real businesses. YC CEO Garry Tan pointed to startups reaching $10M in revenue with fewer than 10 people. That would have been structurally impossible in 2019.

The common pattern in communities like Reddit and Indie Hackers follows a “graduate workflow”: prototype fast in Lovable or Bolt, validate with real users quickly, then either rebuild properly in Cursor or traditional development once the idea is proven — or hire an engineer to clean up the codebase for production. Start vibe, graduate to code.

Lovable projects $1 billion in ARR by summer 2026, five times its current $200M run rate. That would make it one of the fastest-scaling B2C software products in history. Whether that reflects genuine product launches or churning users experimenting with prototypes is the key question. The honest answer: both. High churn is a documented concern across all these platforms, precisely because the barrier to trying is so low that many users spin up projects they never finish.

The sectors where AI coding platforms are generating real-world product launches break down clearly: SaaS tools (34%), fintech applications (21%), healthtech prototypes (15%), and e-commerce storefronts (13%). The fintech and healthtech numbers are notable precisely because these are regulated industries where AI-generated code requires the most careful human review before shipping — and where the security vulnerabilities documented in 2025 represent the highest actual risk.

Final word

So what does it all mean?

We are in the middle of a genuine platform shift — one that happens once or twice per generation in software. The printing press didn’t replace writers; it made writing accessible to millions more people and changed what “writing” meant forever. The spreadsheet didn’t replace accountants; it changed what accountants spent their time doing. AI coding platforms are doing something similar to software development.

The question is not whether to use these tools. 82% of developers already do. The question is whether you’re using them with eyes open: understanding what they actually are (pattern-matching engines trained on human knowledge), what they’re good at (speed, scaffolding, prototyping, compressing the skill gap), and what they’re bad at (security, novel architecture, complex production systems).

The real win

AI coding tools have genuinely democratized software creation. Millions of people who could not previously build software now can. That is a profound and real expansion of human capability — not hype.

The real risk

Production systems built with AI and not reviewed by experienced engineers are accumulating vulnerabilities, technical debt, and architectural problems that will surface at scale. The bill will come due.

The uncomfortable truth

AI doesn’t know what it doesn’t know. It generates plausible code, not correct code. The difference only becomes visible when the system fails under real-world conditions that the training data didn’t anticipate.

The bigger picture

Every model was built on human knowledge. AI is not a new form of intelligence — it’s the stored pattern of human intelligence, made instantly accessible. The humans who fed it that knowledge are still the source of everything it can do.

The most honest summary: AI coding platforms are the best prototyping tools ever built and potentially dangerous production deployment tools if used without human oversight. Use them to go from zero to validated idea in a weekend. Use experienced engineers to take it from there. The future belongs to builders who understand both sides of that line — and know precisely when they’re on the wrong one.

The code revolution isn’t coming. It’s here. The question is whether you’re building with it — or being swept along by it.

References:

[1] CB Insights — Coding AI market share, December 2025 → https://www.cbinsights.com/research/report/coding-ai-market-share-december-2025/

[2] Stack Overflow Developer Survey 2025 — AI section → https://survey.stackoverflow.co/2025/ai/

[3] METR — Measuring the impact of early-2025 AI on experienced open-source developer productivity → https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/

[4] Guardio Labs — VibeScamming research → https://guard.io/labs/vibescamming-from-prompt-to-phish-benchmarking-popular-ai-agents-resistance-to-the-dark-side

[5] Y Combinator Blog → https://www.ycombinator.com/blog/

[6] CB Insights — AI software development market map → https://www.cbinsights.com/research/ai-software-development-market-map/

[7] METR study — academic paper (arXiv) → https://arxiv.org/abs/2507.09089

🎬 Must Watch: “The vibe coding mind virus explained” by Fireship →

https://medium.com/media/0f1740eb50c77f880c4fc6a6cabc032c/href

AI writes the code and humans still write the rules was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.