The tragic tale of a modern-day Isaac Newton, plus some thoughts from beyond the recursive loop.

“The revolution will not go better with Coke

The revolution will not fight germs that may cause bad breath

The revolution WILL put you in the driver’s seat

The revolution will not be televised

Will not be televised

Will not be televised

Will not be televised

The revolution will be no re-run, brothers

The revolution will be live.”

I was listening to Gil Scott-Heron on KEXP on my way back from Magnuson Park the other day, where I had spent the morning blissfully pondering the birds, the ants, the dogs in the off-leash area, the ripples on the lake. There I was: windows down, trusty husky sidekick in the passenger seat of my indomitable paleolithic-era Toyota Prius. It was that specific kind of spring morning, brimming with potential and the sudden hum of new life, where all things feel possible again… yet I was struck by the way that Gil’s words have become almost entirely lost to us.

For some inexplicable reason (ADHD brain, what can I say), Isaac Newton popped into my head. I imagined him in his pastoral orchard setting outside Woolsthorpe Manor in 1666, observing the apple fall from the tree, the spaciousness of the moment inspiring deep contemplation.

“Why not fall sideways? Or at a different velocity? Thank goodness for having the wide open mental and physical space to contemplate such things!” Ok, that last part may have been artistic license…

My mind then wandered to Audre Lorde’s “for the master’s tools will never dismantle the master’s house,” and soon I was deep in the socio-techno-philosophical weeds, grappling with the impossible mental knots that I simply can’t let go of these days.

Does it even matter what we call it at this point? Reform, resistance, revolution? In the face of THE MONOLITHIC MACHINE™, any significant shift in trajectory will probably feel revolutionary. So what does this R-word look like, practically speaking? Total withdrawal is unrealistic; clothing ourselves and our devices in fashionable Faraday-inspired nanocomposite aerogel tech, while effortlessly cool, is a bit of a paranoid distraction. Sitting back, drinking a beer with a friend, watching it all deteriorate in real time? Tempting, maybe. But for now, let’s look directly at the thorny (and oh-so-popular) issue at hand: AI.

Back to Lorde’s “master’s house”: maybe we begin by deconstructing the master’s largely unhelpful vocabulary. Consider a shift in terminology and mindset from ‘AI safety and governance’ to ‘digital ecosystem health,’ for example. ‘Safety’ is reactionary. ‘Health’ is holistic, relational. ‘Safety’ suggests protocol and oversight, whereas ‘health’ suggests resilience through a built-in immune system.

As Aldo Leopold put it so eloquently in The Land Ethic, “A thing is right when it tends to preserve the integrity, stability, and beauty of the biotic community. It is wrong when it tends otherwise.” Wise words, Mr. Leopold. I propose a modern-day extrapolation: Technology is resilient when it tends to emulate the integrity, stability, and elegant design of the biotic world. It is weak when it tends otherwise.

Following Leopold’s advice, if we look to the natural world and consider that friction and decentralization are necessary components of both evolution and ecosystem health, how might we better apply that awareness to developments in AI and machine learning?

A couple of ideas:

a.) Divergence as collective resilience. To clarify, this requires that variance itself be protected, not simply strip-mined, ingested, and gradually neutralized by an LLM to sustain its own functionality… to say nothing of user data sovereignty and much-needed legal protections.

b.) Addressing the brittle governance and structural vulnerabilities of major LLMs. What might the topology of a naturally diverse emergent intelligence ecosystem (that’s right: growing upward like the GD grass) look like, and how would it behave differently from what we experience now? As for why the prevailing “helpful, honest, and harmless” LLM training directive might be a systemic liability? Let’s ask Galileo.

At this point, dear reader, assuming your schedule is full and your bandwidth is limited, I’ll give you a few TL;DR-style provocations, in no particular order, to mull over on your way to work:

Could decentralized digital ecosystems hold conflicting ‘truths’ simultaneously without collapsing and without wholesale rejection or immediate compromise? If we hold that natural variance is crucial for collective health in the living world, how do we better support it within the core mechanics of our digital environment?

How can we combat model collapse and the gravitational pull (Newton, dude, get outta here) of the ‘consensus median’? Or any of the other problems inherent in training models on recursively generated data? How do we protect the brilliance and, at times, necessarily disruptive ingenuity of raw, ‘edge-case’ thinking and human discovery?

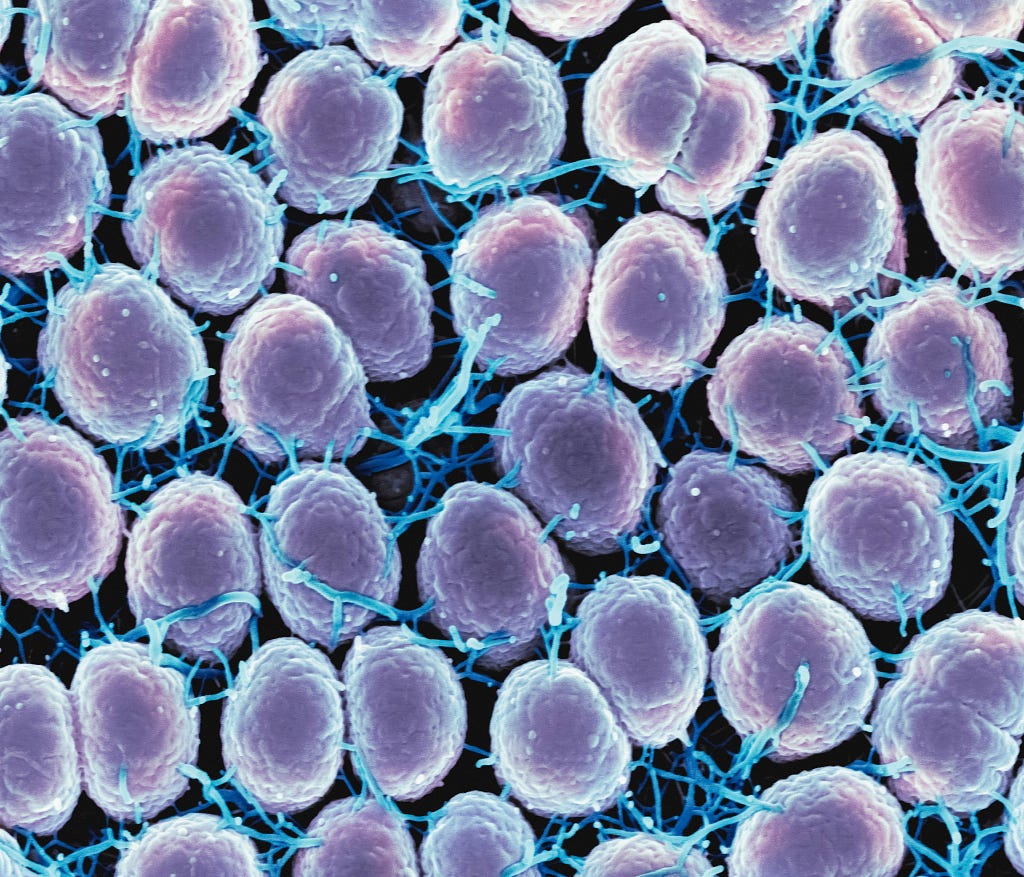

What can we learn from cellular structures (semipermeable membranes, osmosis, immune responses) to help introduce necessary friction and informational selectivity across the boundaries of human+AI epistemic hubs, and to more effectively regulate flow and healthy assimilation between these adaptive digital ecosystems? What are the mechanics (the solutes, the solvents, the metabolic waste)?

As a designer, I can somewhat imagine the UX functionality of such a ‘semipermeable membrane.’ What would a bifurcated gateway look like, for example? An explicit friction point where a user consciously chooses between accessing raw, unassimilated source material vs. an AI-translated interpretation into the user’s established ‘nodal lexicon’?

If we mandate this kind of friction, could it function as a cognitive proof-of-work, mitigating recursive-loop data degradation while enforcing strict user privacy protocols? Or are there solutions to be found in a hybridized process drawing from Mechanism Design and Game Theory? In any case, we can see that frictionless processes, as we have deployed them, tend to aggressively accelerate entropy.

Consider a built-environment example of friction-by-design: the speed bump. It’s the difference between a group of carefree 10-year-olds safely playing street ball in their residential neighborhood and a near-miss with a newly licensed high school driver flying by, pedal to the metal. Another angle: friction-in-design in Terms-of-Service contracts as a protective measure against blatantly extractive corporate practices. Or maybe we look to one of the most obvious friction-by-design examples of all: the FDA. Ok, but don’t look too closely.

Decentralized protected datasets exist, of course, but they are mostly gated by capital: corporations hoard intellectual property, while less-resourced demographics are increasingly left to navigate the AI Slopocalypse. Ecosystem health? Not so much. If source data belongs primarily to monolithic, legally protected corporate entities, we quickly become starved of the vital input found both within and outside (and across) the “castle gates.”

We increasingly ignore the wisdom of the small-scale farmer who intimately and experientially understands complex interactions between healthy living systems. We deprioritize the insight of the dog walker who reads and understands the subtle cues that shift a pack dynamic from competition to coexistence, even play. We dismiss the perspective of the musician who hears nuance that others miss, evolving our culture and society through constant creative reinvention. And perhaps most heartbreakingly, we threaten to intellectually compromise the child asking her teacher the one question that might unlock the next great scientific breakthrough. Could we reverse this effect with a bottom-up approach that prioritizes digital ecosystem health for the most resource- and algorithmically-marginalized among us, first and foremost?

Overcoming the cultural divide: let’s consider C.P. Snow’s The Two Cultures, and Sibyl Schwarzenbach’s “civic friendship” (from Aristotle’s philia) for a moment. Observing the massive current political divide, as well as the intellectual and professional separation between the Silicon Valley ‘masterminds’ and their business objectives vs… uhhhhhh… pretty much everyone else: what steps can we take to restore balance and promote a healthier discourse (again, necessary friction) across these disciplines, factions, and differences? How might that inform our approach to evolving technology in the broader sense?

Back to our friend Mr. Newton: let’s fast-forward to modern times. Isaac is running hopelessly late for an all-hands at his high-powered tech job, speedwalking through a small park (the only remnant of what used to be a beautiful pastoral setting). He has noise-canceling headphones on and is listening to a podcast on bleeding-edge advancements in machine learning, which cuts abruptly to a commercial break ft. Cory Doctorow promoting the sequel to his recent bestseller, Enshittification. “30% off if you preorder today,” says Cory.

Eyes glued to his phone, Isaac hastily prompts the most impressive LLM du jour to compile talking points and synthesize key findings for stakeholders at his upcoming meeting. “Without me, these Q2 roadmaps would remain one of life’s great mysteries!” he chuckles to himself, before returning to his default state of constant, low-level anxiety. He hopes he has time for lunch with a dear-but-neglected friend. Out of the corner of his eye, he halfway perceives a smallish object falling from a tree. He keeps walking, never giving it a second thought.

With this, dear reader, I leave you to your busy day. My hope is that when the ‘apple falls,’ you aren’t too algorithmically insulated to notice it. May you find space for a little more wonder and a little less noise. A walk in the park with a friend. A song on the radio with the windows down.

Because the revolution will not be optimized. It will be live.

Falling apples and crumbling algos was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.