A framework for Crisis Information Design.

People can no longer tell the difference between real images and AI-generated ones. That’s not an opinion. It’s a finding from researchers at Lancaster University and UC Berkeley, who demonstrated that AI-synthesized faces are now perceived as more trustworthy than real human faces. Let that sink in for a second: the fake version of reality is more believable than reality itself.

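Meanwhile, false news spreads about six times faster than the truth on social media: MIT researchers studying Twitter found that true stories took roughly six times as long as false ones to reach 1,500 people. During a crisis, when stress and fear impair our ability to think critically, the information ecosystem becomes a minefield.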

The platforms where people actually consume information during emergencies (WhatsApp, X, Facebook, Reddit) have almost no verification infrastructure at the point of consumption. The tools that can verify content (Snopes, PolitiFact, C2PA, SynthID, Hive AI) exist on entirely separate platforms, accessed hours later, long after the damage is done.

This is a design problem. Not a policy problem. Not a content moderation problem. A design problem. And it’s one that the UX community has barely started to address.

This isn’t just theoretical. In February 2026, I watched it unfold in real time while sheltering in place during a crisis in Puerto Vallarta, Mexico. What I experienced that day revealed a set of systemic failures that I’ve spent the last few days trying to make sense of. This article is the result: a framework for designers who want to build information products that actually work when people need them most.

But first, some context. Because the problem didn’t start with AI. It has been escalating since the beginning of the internet.

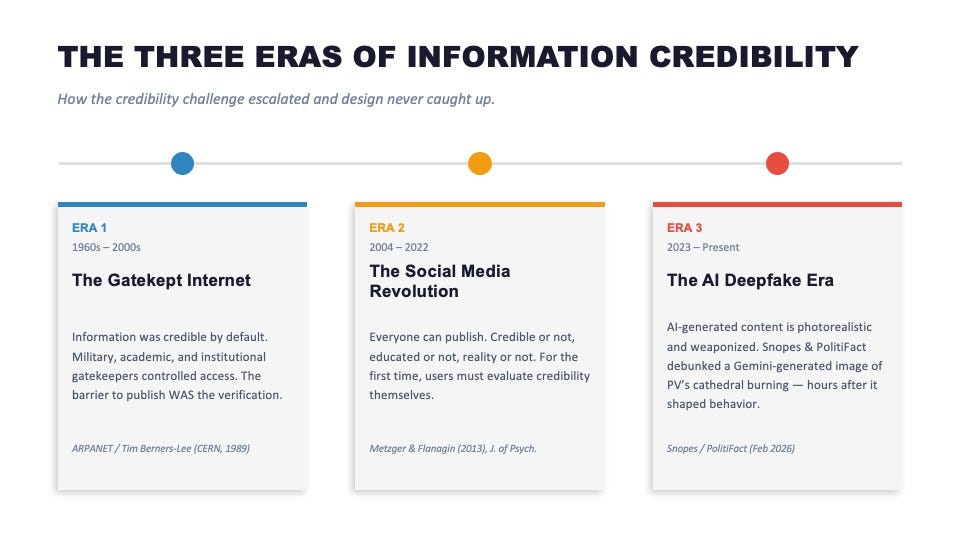

The three eras of information credibility

Era 1: The gatekept internet (1960s to 2000s)

The internet wasn’t built for everyone. It was built for institutions. ARPANET connected universities and military installations. Tim Berners-Lee’s original 1989 proposal at CERN was a document management system for physicists.

The early web was credible by default because the barrier to publishing was the verification. If something was online, it came from a credentialed source. Military. Academic. Government.

Users didn’t need to evaluate credibility. The system did it for them.

This era built an assumption into the fabric of digital products that we’ve never fully shaken: that information, once published, is probably true.

Era 2: The social media revolution (2004 to 2022)

Then everything changed. Facebook launched in 2004. YouTube in 2005. Twitter in 2006. Reddit in 2005. Suddenly, everyone could publish. Credible or not. Educated or not. Reality or not.

For the first time in the history of the internet, the burden of evaluating credibility shifted entirely to the individual user. But nobody designed the tools to help them do it. Research by Metzger and Flanagin showed that users rely on cognitive shortcuts (how professional does the site look? do other people seem to trust it?) rather than actual verification.

By 2016, the consequences were visible to everyone. Allcott and Gentzkow’s analysis of fake news during the 2016 U.S. presidential election documented how widely fabricated stories were shared on social media ahead of the vote.

The credibility problem is a design failure, not a problem with the technology. We gave everyone a megaphone and nobody a filter. And we never taught people how to think critically about what’s true.

Era 3: The AI deepfake era (2023 to present)

Now here we are… AI-generated content is photorealistic, produced in seconds, and the tools to create it are free. The distinction between “real” and “generated” has collapsed for the average person. And during crises, these tools are being weaponized in real time, exacerbating the problem.

The evidence is recent and specific. During a crisis in Mexico in February 2026, an AI-generated image of the Our Lady of Guadalupe cathedral in Puerto Vallarta engulfed in flames went viral across X, WhatsApp, Facebook, and Instagram.

Snopes confirmed it was generated by Google Gemini. PolitiFact rated it fabricated, with Hive AI detecting it as synthetic with 99.9% confidence. Google’s own SynthID watermark was embedded in the image. The Gemini logo was still on it.

None of this information reached the people sharing it in WhatsApp groups while they sheltered in their homes.

Each era created a new credibility challenge. Design never caught up. We’re still building information products on Era 1 assumptions in an Era 3 world.

What crisis information failure looks like from the inside

I live in Puerto Vallarta, Mexico, during the winter. It’s my second home. I’m originally from the U.S. and now based in France, and I’ve been coming here for years. On February 22, 2026, a crisis erupted across the state of Jalisco. I woke up to explosions and smoke rising a block from my apartment.

What followed was a frantic scramble for information that exposed every failure I’ve just described.

WhatsApp became my primary source. People shared real updates: road closures, fire locations, which neighborhoods to avoid. But in the same thread, someone posted a video of a church burning down. A friend called it out as fake. It never happened.

For the minutes before that correction, though, it was real to everyone in the group. Someone else shared a rumor about street violence at noon. No source. No verification. Just a sentence typed into a chat that changed my behavior for hours. I closed my blinds and didn’t open them.

My husband used Grok on X to try to synthesize what was happening. He ran a deep search across 150+ sources and shared the result with our group:

“The rumor is unverified social-media panic with no credible source or backing from authorities or news outlets.”

That actually helped. That was technology doing what it should do in a crisis: separating signal from noise. It was one of the few bright spots of the day.

Then came the moment that still makes me angry.

Someone shared a New York Times link in the group. Finally, a trusted, verified, globally respected source. I clicked it. Paywall. I tried to sign in. Password reset. Two-factor authentication. Redirected. Looped. I did all of this on my phone while checking through the blinds for new columns of smoke.

I gave up and went back to the rumor mill.

The equation should trouble everyone who builds information products: misinformation was free and everywhere. The truth was paywalled and broken. I wrote about this experience in more detail in a previous article, but the short version is this:

The User Experience of finding trustworthy information during a crisis is a nightmare… not because the information doesn’t exist, but because the design fails.

The U.S. State Department posted a shelter-in-place advisory on X at 1:17 p.m. I’d been living the crisis since 8:15 a.m. Five hours of silence from official channels.

That experience left me with something more than frustration. It left me with the urge to create a framework to prevent this from happening again.

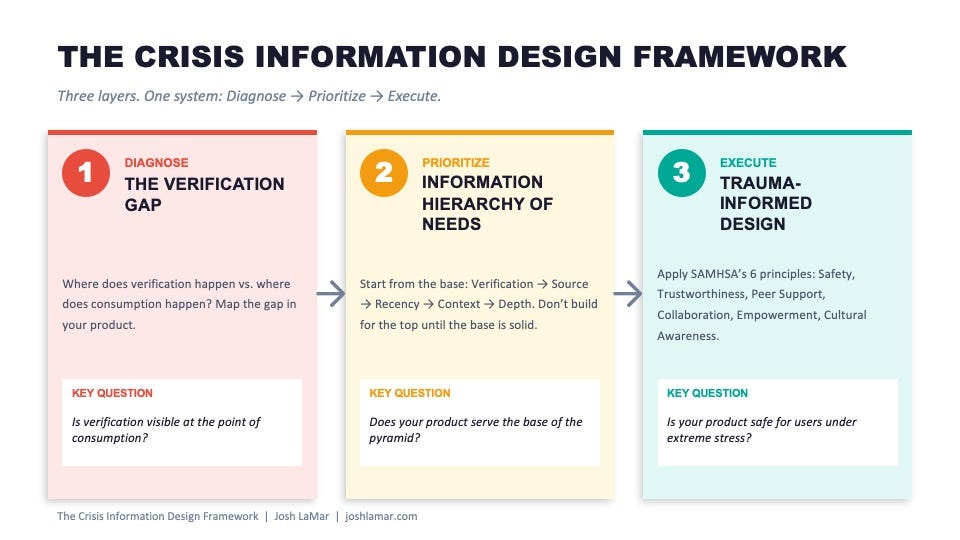

The Crisis Information Design Framework

What I experienced in Puerto Vallarta was three interconnected failures happening simultaneously. I’ve spent the last few days pulling them apart and synthesizing them into a model that designers can actually use.

The Crisis Information Design Framework has three layers. Each layer answers a different question and addresses a different stage of the design process: diagnose, prioritize, execute.

Layer 1: The Verification Gap (Diagnose)

The Verification Gap is the spatial and temporal distance between where people consume information and where verification actually happens.

During the crisis, consumption happened instantly. On WhatsApp, on X, in group chats with shaking hands.

Verification happened hours later, on Snopes, on PolitiFact, through C2PA content credentials analysis, via Hive AI detection.

The gap between those two moments is where misinformation does its damage. People act on unverified information because verified information doesn’t reach them in time, in the same place, or in the same format.

The design question for every product team: is verification visible at the point of consumption? If the answer is no, you have a Verification Gap.
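One way to make that question measurable is to log, for each piece of content, where and when someone consumed it and where and when a verdict appeared. The sketch below is only an illustration of the idea, not part of any existing tool: the `Exposure` record, its field names, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Exposure:
    """One piece of content as a user met it (hypothetical record shape)."""
    consumed_on: str                   # surface where the user saw it
    consumed_at: datetime
    verified_on: Optional[str] = None  # surface where a verdict appeared, if any
    verified_at: Optional[datetime] = None


def verification_gap(e: Exposure) -> dict:
    """Describe the temporal and spatial gap for one exposure."""
    if e.verified_on is None or e.verified_at is None:
        # No verdict exists anywhere yet: the gap is still open.
        return {"has_gap": True, "delay": None, "same_surface": False}
    delay = max(e.verified_at - e.consumed_at, timedelta(0))
    same_surface = e.verified_on == e.consumed_on
    return {
        "has_gap": delay > timedelta(0) or not same_surface,
        "delay": delay,
        "same_surface": same_surface,
    }


# Illustrative values only: seen in a group chat, checked elsewhere four hours later.
seen = Exposure("group chat", datetime(2026, 1, 1, 9, 0),
                "fact-check site", datetime(2026, 1, 1, 13, 0))
print(verification_gap(seen))
```

A product closes the gap only when the delay is zero and the verdict appears on the same surface as the content, which is why the function checks both.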

Layer 2: Information Hierarchy of Needs (Prioritize)

When you’re counting the food in your fridge and wondering if your electricity will stay on, Maslow’s hierarchy of needs stops being a framework you learned in school and becomes your new operating system.

The same principle applies to information design. I’m proposing an Information Hierarchy of Needs with five levels, built from the base up:

- VERIFICATION (base): Is this real? Is this verified? Can I act on this safely?

- SOURCE: Where did this come from? Who created it? Are they credible?

- RECENCY: Is this current or outdated? During a crisis, two-hour-old information can be out of date and factually incorrect.

- CONTEXT: What does this mean for me, here, right now?

- DEPTH (top): Full understanding. Nuance. Multiple perspectives.

Most information products today are designed for the top of the pyramid: depth, context, nuance. Almost none address the base. You can’t build for depth when users can’t even tell if what they’re looking at is real.
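To show how the hierarchy could work as an audit, here is a minimal sketch that walks the five levels from the base up and reports the first one a product fails to serve. The level names come from the list above; the function name and the sample audit are assumptions made for illustration.

```python
from typing import Dict, List, Optional

# The five levels from the list above, ordered from the base of the pyramid to the top.
HIERARCHY: List[str] = ["verification", "source", "recency", "context", "depth"]


def first_unmet_level(audit: Dict[str, bool]) -> Optional[str]:
    """Return the lowest level the product does not serve, or None if all are met.

    `audit` maps each level name to True or False; a missing level counts as unmet.
    """
    for level in HIERARCHY:
        if not audit.get(level, False):
            return level
    return None


# Hypothetical audit of a news app that is strong on depth but shows no verification status.
news_app = {"verification": False, "source": True, "recency": True,
            "context": True, "depth": True}
print(first_unmet_level(news_app))  # verification
```

Because the loop starts at the base, a product that excels at depth still fails the audit at "verification", which is the point of the pyramid.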

City Bureau has developed a related “information needs” concept for community journalism, but the application to product design is distinct. The question for designers isn’t, “What stories does this community need?” It’s “Does your product help users verify before they act?”

Layer 3: Trauma-Informed Information Design (Execute)

The third layer addresses how to build it right. SAMHSA’s six principles of trauma-informed care, originally developed for healthcare and social services, translate directly to the design of digital products that people rely on during a crisis. I’ve adapted each one for my framework:

- Safety. Verification is visible at the point of consumption. No ambiguity about what’s verified versus what’s not. Don’t autoplay graphic content.

- Trustworthiness. Source provenance is always shown. No dark patterns in authentication flows. No deceptive interstitials during crisis access.

- Peer Support. Community verification layers. Crowd-sourced flagging. Surface it when multiple users flag the same content.

- Collaboration. Platform and official source integration. Pin verified government updates. Partner with fact-checkers in real time.

- Empowerment. User control over information filtering. Agency over what they see. Not algorithmic force-feeding of engagement-optimized fear.

- Cultural Awareness. Multilingual. Locally contextualized. Accessible under stress: larger touch targets, simplified flows, offline capability.

Trauma-informed design has been applied in healthcare and social services for over a decade, guided by SAMHSA’s 2014 framework and subsequent implementation research by Menschner and Maul for the Center for Health Care Strategies.

It has not yet been applied to crisis misinformation or information product design. That’s the gap this framework addresses.

Together, these three layers form a complete system:

- Diagnose the Verification Gap

- Prioritize using the Information Hierarchy of Needs

- Execute using Trauma-Informed Design principles

What exists today, and what’s missing

It’s worth acknowledging the work that’s already underway.

The Coalition for Content Provenance and Authenticity (C2PA) has built a content credentials standard backed by Adobe, Microsoft, Google, and the BBC. It attaches origin metadata to images so provenance can be verified.

Google’s SynthID embeds imperceptible watermarks into AI-generated content.

Hive Moderation provides AI detection tools that can identify synthetic content with high confidence. The technology exists.

On the policy side, the EU Digital Services Act includes a crisis-response mechanism (Article 36) that requires platforms to take “proportionate and effective” measures during emergencies. It was first activated during the Israel-Hamas conflict in 2023.

X’s Community Notes provides crowd-sourced context on posts.

Google’s Fact Check Tools API lets developers surface fact-checks programmatically.

So the building blocks are there. What’s missing is the design layer that connects them to users at the moment they need them.

No messaging app (WhatsApp, Signal, Telegram) has a crisis verification mode. No platform surfaces C2PA or SynthID metadata to end users in a meaningful way. No emergency paywall protocol is industry-standard. Some outlets dropped paywalls during COVID, including the New York Times, the Wall Street Journal, and The Atlantic, but it was ad hoc, not systematic.

And the fundamental architecture of verification remains broken: the tools that can tell you whether something is real exist on entirely different platforms from the ones where you encounter the content.

That’s the Verification Gap, operating at an industry level.

What designers and product teams can do

For messaging platforms (WhatsApp, Signal, Telegram)

Build a crisis mode. When a region-specific crisis is declared, enable verified source badges, rumor-flagging, and pinned official updates within group chats. Surface SynthID and C2PA metadata inline.

If an image has an AI watermark, show it before users share.

Let users flag content as unverified and surface flag counts to group members.
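As a rough sketch of the crisis mode described above, the function below decides which labels a chat client would show before someone forwards an attachment. It assumes the client already holds these signals; `MediaSignals`, its fields, and the flag threshold are hypothetical and do not reflect how WhatsApp, SynthID, or C2PA expose data.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MediaSignals:
    """Signals a client might hold for an attachment (hypothetical fields)."""
    has_ai_watermark: bool       # an AI watermark was detected upstream
    has_provenance_record: bool  # content credentials are attached
    unverified_flags: int        # group members who flagged it as unverified


def labels_before_share(media: MediaSignals, crisis_mode: bool,
                        flag_threshold: int = 2) -> List[str]:
    """Return the labels to show inline before the user forwards the attachment."""
    if not crisis_mode:
        return []
    labels = []
    if media.has_ai_watermark:
        labels.append("AI-generated (watermark detected)")
    if media.has_provenance_record:
        labels.append("Content credentials available")
    if media.unverified_flags >= flag_threshold:
        labels.append(f"Flagged as unverified by {media.unverified_flags} members")
    return labels


# Hypothetical attachment: watermarked, no credentials, flagged by three people.
print(labels_before_share(MediaSignals(True, False, 3), crisis_mode=True))
```

The threshold is a design choice: surfacing a count only after more than one person flags the content keeps a single mistaken tap from labeling a real update.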

For news organizations

Implement emergency open-access protocols. Automatic paywall drops during declared emergencies within affected regions. Geofenced, time-limited, automated.

And PLEASE: no sign-in loops, no credit card prompts, no redirect chains during a crisis!!!

If someone is in a crisis zone, remove every barrier between them and verified information. The sign-in experience I had with the New York Times while smoke was visible from my window should never happen to anyone.
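Here is one way the geofenced, time-limited, automated paywall drop could be sketched. Everything in it is an assumption made for illustration: the `DeclaredEmergency` shape, the circular geofence, and the idea that the publisher already knows the reader's approximate location.

```python
import math
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable


@dataclass
class DeclaredEmergency:
    """A declared emergency with a circular geofence and a time window (hypothetical)."""
    lat: float
    lon: float
    radius_km: float
    starts: datetime
    ends: datetime


def distance_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, using the haversine formula."""
    r = 6371.0  # mean Earth radius in kilometers
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def paywall_applies(reader_lat: float, reader_lon: float, now: datetime,
                    emergencies: Iterable[DeclaredEmergency]) -> bool:
    """Return False (open access) if the reader is inside any active geofence."""
    for e in emergencies:
        active = e.starts <= now <= e.ends
        inside = distance_km(reader_lat, reader_lon, e.lat, e.lon) <= e.radius_km
        if active and inside:
            return False
    return True


# Illustrative emergency: 50 km radius, active for 48 hours.
emergency = DeclaredEmergency(20.0, -105.0, 50.0,
                              datetime(2026, 1, 1, 0, 0), datetime(2026, 1, 3, 0, 0))
print(paywall_applies(20.1, -105.0, datetime(2026, 1, 1, 12, 0), [emergency]))  # False
```

The check runs before any sign-in step, so a reader inside the geofence never reaches the authentication flow at all.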

For social platforms (X, Facebook, Reddit, Instagram)

Build verification-first feeds during crises. When a crisis is detected, re-rank feeds to prioritize verified sources over engagement metrics. Before users share flagged AI-generated content, show a verification prompt: “This image may be AI-generated. View analysis. Share anyway.” That single interstitial could have prevented the cathedral image from going viral.
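The re-ranking and the share prompt can be sketched in a few lines. This is a simplified illustration under stated assumptions, not how any platform ranks content: the `Post` fields, the `source_verified` flag, and the single engagement score are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Post:
    post_id: str
    engagement: int          # any engagement score, e.g. likes plus shares
    source_verified: bool    # True for sources the platform has verified (hypothetical)
    flagged_ai: bool = False


def rank_feed(posts: List[Post], crisis_active: bool) -> List[Post]:
    """Order by engagement normally; put verified sources first during a crisis."""
    if crisis_active:
        # Verified sources sort first, then by engagement within each group.
        return sorted(posts, key=lambda p: (not p.source_verified, -p.engagement))
    return sorted(posts, key=lambda p: -p.engagement)


def share_prompt(post: Post) -> Optional[str]:
    """Return the interstitial text for flagged content, or None to share directly."""
    if post.flagged_ai:
        return "This image may be AI-generated. View analysis. Share anyway."
    return None


# Hypothetical feed: a viral rumor and two verified but less popular updates.
feed = [Post("rumor", 9000, False, flagged_ai=True),
        Post("official", 120, True),
        Post("local-news", 450, True)]
print([p.post_id for p in rank_feed(feed, crisis_active=True)])
# ['local-news', 'official', 'rumor']
```

Outside a crisis the same feed would lead with the rumor, because engagement is the only sort key. The prompt still lets people share, so the user keeps the control the Empowerment principle asks for.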

For every product that asks users to trust information

Apply the Information Hierarchy of Needs as a design audit. Does your product serve the base of the pyramid before the top? Apply Trauma-Informed Design principles. Is your product safe for someone whose hands are shaking, who hasn’t slept, who is making survival decisions based on what they see on their screen?

If you’re not sure, the answer is probably no. And that’s where the work starts.

The crisis I experienced wasn’t unique. Crises are accelerating: climate disasters, political instability, public health emergencies. Every one of them will be accompanied by an information crisis. And every information crisis will now include AI-generated content designed to confuse, inflame, and manipulate.

The technology to solve this exists. C2PA can prove provenance. SynthID can identify watermarked AI content. Fact-checkers can verify claims. The problem isn’t capability. It’s design. None of these tools reach users at the moment they need them most.

This is our problem. And designers are the ones who can solve it.

The Crisis Information Design Framework is a lens that can be applied to any product that asks users to trust what they’re seeing. Because in a crisis, the UX of information isn’t a feature. It’s literally about survival.

Josh LaMar is CEO of Amplinate, where he advises on product growth and AI decision strategy. Over 20 years, he has spent 40,000+ hours listening to customers across 19 countries on five continents. He lives between Puerto Vallarta, Mexico and Paris, France.

Download the one-page Crisis Information Design Framework cheat sheet at joshlamar.com.

References

Academic Research

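- Nightingale, S. J., & Farid, H. (2022). AI-synthesized faces are indistinguishable from real faces and more trustworthy. PNAS. DOI: 10.1073/pnas.2120481119

- Vosoughi, S., Roy, D., & Aral, S. (2018). The spread of true and false news online. Science. DOI: 10.1126/science.aap9559

- Metzger, M. J., & Flanagin, A. J. (2013). Credibility and trust of information in online environments: The use of cognitive heuristics. Journal of Pragmatics. DOI: 10.1016/j.pragma.2013.07.012

- Allcott, H., & Gentzkow, M. (2017). Social Media and Fake News in the 2016 Election. Journal of Economic Perspectives. DOI: 10.1257/jep.31.2.211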

Institutional & Government

- Trauma-Informed Care

- Center for Health Care Strategies

- The Digital Services Act

- Information Management: A Proposal

- A Brief History of the Internet

Fact-Checks (February 2026)

Technology & Standards

- c2pa.org

- deepmind.google/technologies/synthid

- hivemoderation.com

- toolbox.google.com/factcheck

- communitynotes.x.com

Journalism & Industry

- Reuters Institute

- Major Publishers Take Down Paywalls for Coronavirus Coverage

- Despite relaxing paywalls, publishers like Bloomberg, WSJ and The Atlantic see subscription spikes

- citybureau.org

- Is Your Journalism a Luxury or Necessity?

The UX of survival in the age of AI deepfakes was originally published in UX Collective on Medium.